The Margin Math That Keeps AI Founders Up at Night

Here is a calculation most AI teams run too late. Take a typical enterprise RAG application: 100,000 queries per day, average 1,200 input tokens per query (system prompt plus retrieved context plus user question), and 350 output tokens per response. Running on GPT-4o.

- Input cost: 1,200 tokens x $2.50 per million = $0.003 per query

- Output cost: 350 tokens x $10 per million = $0.0035 per query

- Total: $0.0065 per query

At 100,000 queries per day: $650 per day. $19,500 per month. $234,000 per year. Just for the LLM calls. Before vector database costs, embedding generation, infrastructure, and support.

If you are charging $99 per user per month and your users generate 50 queries per day, your LLM cost per user is $9.75 per month. That is 9.85% of revenue in a single line item before you count anything else. At scale, that math kills gross margins faster than any other single factor.
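
The arithmetic above is easy to keep honest in a few lines of Python. This is a minimal sketch rather than a billing tool: the `QueryProfile` class and `llm_share_of_revenue` helper are names invented for illustration, the prices are the GPT-4o rates quoted above, and a 30-day month is assumed.

```python
from dataclasses import dataclass


@dataclass
class QueryProfile:
    """Token counts and per-million-token prices (USD) for one typical query."""
    input_tokens: int
    output_tokens: int
    input_price_per_million: float
    output_price_per_million: float

    def cost_per_query(self) -> float:
        total = (self.input_tokens * self.input_price_per_million
                 + self.output_tokens * self.output_price_per_million)
        return total / 1_000_000


def llm_share_of_revenue(profile: QueryProfile,
                         queries_per_user_per_day: float,
                         price_per_user_per_month: float,
                         days_per_month: int = 30) -> float:
    """Fraction of per-user revenue spent on LLM calls."""
    monthly_cost = (profile.cost_per_query()
                    * queries_per_user_per_day * days_per_month)
    return monthly_cost / price_per_user_per_month


# The example from this section: 1,200 input and 350 output tokens on GPT-4o
gpt4o = QueryProfile(1200, 350, 2.50, 10.00)
print(f"cost per query: ${gpt4o.cost_per_query():.4f}")
print(f"share of revenue: {llm_share_of_revenue(gpt4o, 50, 99):.2%}")
```

Swapping in your own token counts and prices is the quickest way to see which of the two terms, input or output, dominates your bill.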

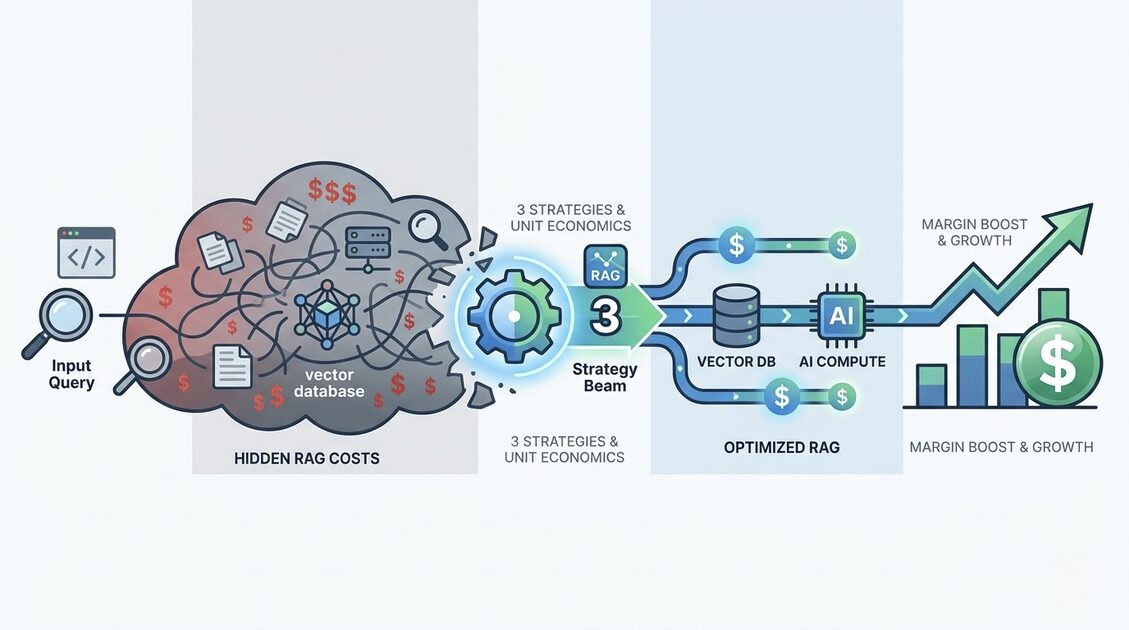

The frustrating part: most of this is completely avoidable. Not through degrading product quality. Through a handful of changes that most teams have never made because nobody told them the specific numbers.

This guide covers those specific numbers, the exact optimizations, and the reasoning behind each one. By the end you will be able to calculate your real cost per query and know precisely where to cut it.

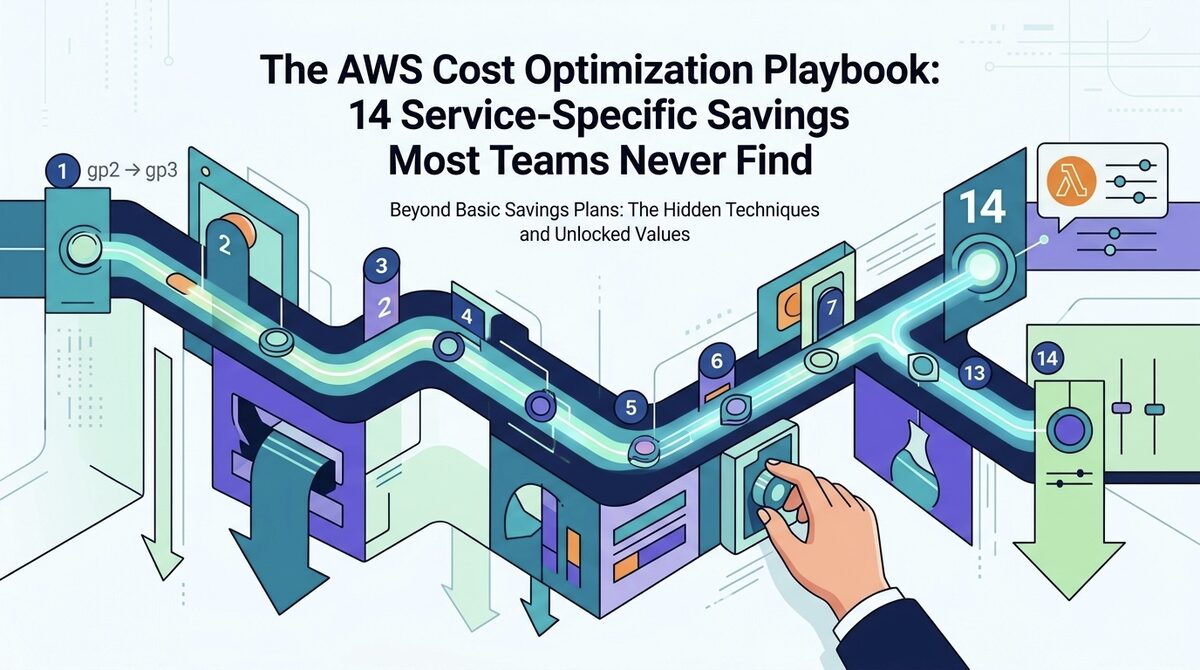

What a RAG Query Actually Costs: The Full Breakdown

Most cost estimates for RAG stop at LLM pricing. The real picture has six components, and teams that only optimize the most obvious one leave the majority of savings on the table.

Component 1: Query embedding

Every user query needs to be embedded before hitting the vector database. Using OpenAI text-embedding-3-small at $0.02 per million tokens, a 20-token query costs $0.0000004. Negligible per query. At 100,000 queries per day, it is $0.04 per day. Stop worrying about query embedding costs.

Component 2: Document corpus embedding

This is the one that surprises teams. A single full embedding run is cheap: for a 10 million token corpus, text-embedding-3-small costs $0.20 per run. The cost comes from repetition. If your pipeline re-embeds the whole corpus on every document update rather than only the documents that changed, and you have 1,000 updates per day across a 1 million document corpus, you could be running near-full re-indexing jobs daily. The fix is content hashing: store a hash of each document alongside its embedding, and only re-embed when the hash changes. This alone can reduce corpus embedding costs by 90 to 95% for typical knowledge bases.
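
The content-hashing idea can be sketched as follows. This is an illustration under assumptions, not a drop-in pipeline: the function names are invented here, the hashes are kept in a plain dict where a real system would store them next to the vectors, and SHA-256 is one reasonable choice of hash among several.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def docs_needing_embedding(documents: dict, stored_hashes: dict) -> list:
    """Return IDs of documents that are new or whose content has changed."""
    return [
        doc_id
        for doc_id, text in documents.items()
        if stored_hashes.get(doc_id) != content_hash(text)
    ]


# Hypothetical example: hashes saved at the last indexing run
stored_hashes = {
    "returns-policy": content_hash("Returns accepted within 30 days."),
    "shipping": content_hash("Ships in 2 business days."),
}
documents = {
    "returns-policy": "Returns accepted within 30 days.",  # unchanged
    "shipping": "Ships in 3 business days.",               # edited
    "warranty": "One-year limited warranty.",              # new
}
to_embed = docs_needing_embedding(documents, stored_hashes)
print(to_embed)  # only the edited and the new document
```

After embedding the returned documents, write their new hashes back so the next run skips them.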

Component 3: Vector database reads

Pinecone Serverless at $0.08 per million read units. Each query reads roughly 1 read unit per result returned. If you retrieve 10 results per query: $0.0000008 per query. Also negligible. However, Pinecone pod-based pricing at $69 per month per pod means you are paying for capacity whether queries arrive or not. For applications with variable traffic, the idle pod cost can dominate the actual query cost.

Component 4: LLM input tokens

This is where the real money sits. Here are the current rates for models commonly used in production RAG:

| Model | Input (per million tokens) | Output (per million tokens) | Notes |
|---|---|---|---|
| GPT-4o | $2.50 | $10.00 | With prompt caching: $1.25 input |
| GPT-4o-mini | $0.15 | $0.60 | 17x cheaper input than GPT-4o |
| Claude 3.5 Sonnet | $3.00 | $15.00 | With cache read: $0.30 input |
| Claude 3.5 Haiku | $0.80 | $4.00 | Fast, capable for factual retrieval |
| Claude 3 Opus | $15.00 | $75.00 | Only for highest-complexity tasks |
| Cohere Command R+ | $2.50 | $10.00 | Built-in RAG grounding |
| Llama 3.1 70B (via Groq) | $0.59 | $0.79 | Fast inference, good for routing |

The input-to-output cost ratio is the most important thing to understand here. On GPT-4o, output tokens cost 4x more per token than input tokens. On Claude 3.5 Sonnet, output costs 5x input. Every unnecessary word your LLM generates is disproportionately expensive.

Component 5: LLM output tokens

A response that could be 200 tokens often comes out at 400 to 600 tokens without explicit length guidance in your system prompt. Adding "Respond in under 200 words unless the question requires more detail" to your system prompt is one of the highest-leverage single changes available. Most teams find minimal quality degradation for factual and retrieval-based queries at 200 to 300 token output limits. Cutting average output from 600 tokens to 250 reduces output cost by about 58%. On GPT-4o with 1,200 input tokens, that takes the per-query LLM cost from $0.0090 to $0.0055, a saving of roughly 39%.

Component 6: Reranking (the one that catches people off guard)

Cohere Rerank costs $0.002 per search request when reranking 10 documents. That sounds small until you calculate it at scale: 100,000 queries per day at $0.002 each = $200 per day = $6,000 per month for reranking alone. If reranking improves your retrieval quality by 2 to 3% and you are paying $6,000 per month for it, you need to honestly ask whether that improvement is worth the cost. For many applications, reciprocal rank fusion (combining scores from two retrieval methods without a separate reranking call) achieves similar quality at zero incremental cost.
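
For reference, reciprocal rank fusion fits in a dozen lines. The sketch below assumes you already have two ranked lists of document IDs (for example one from vector search and one from keyword search); the function name is invented for illustration, and `k=60` is the constant commonly used with this method, not a value taken from this guide.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) in every list it appears in;
    the scores are summed and documents are sorted by the total.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by score descending, breaking ties by ID for determinism
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


vector_hits = ["d1", "d2", "d3"]
keyword_hits = ["d3", "d1", "d4"]
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

Documents that rank well in both lists rise to the top, and no extra API call is involved.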

The Prompt Caching Feature Most Teams Have Not Activated

OpenAI and Anthropic both offer prompt caching, and most teams building RAG applications in 2025 and 2026 are not using it.

OpenAI Prompt Caching automatically caches input tokens for prompts of 1,024 tokens or more. Cached tokens are charged at 50% of the regular input price. The caching happens automatically and is reflected in the cached_tokens count under your API response's usage.prompt_tokens_details field. There is no configuration required. You are either getting it or you are not, and you can check by looking at your API response logs.

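Caching matches on the start of the prompt, so static content has to come first. In a RAG system the retrieved context changes per query and is not cached, but the system prompt stays constant. A 500-token system prompt on its own falls short of the 1,024-token minimum. If your static prefix is long enough to trigger caching, you get 50% off those tokens on every request that hits the cache.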
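
One way to check whether you are getting cache hits is to compute the cached share of input tokens from logged usage data. The helpers below are a sketch with invented names; they work on a plain dict shaped like the `usage` object (with `cached_tokens` nested under `prompt_tokens_details`), so convert the SDK's usage object to a dict before passing it in. The 50% discount is the figure quoted above.

```python
def cached_fraction(usage: dict) -> float:
    """Share of prompt tokens that were served from the prompt cache."""
    prompt_tokens = usage.get("prompt_tokens", 0)
    details = usage.get("prompt_tokens_details") or {}
    cached = details.get("cached_tokens", 0)
    return cached / prompt_tokens if prompt_tokens else 0.0


def effective_input_cost(usage: dict, price_per_million: float,
                         cached_discount: float = 0.5) -> float:
    """Input cost in USD after applying the discount to cached tokens."""
    prompt_tokens = usage.get("prompt_tokens", 0)
    details = usage.get("prompt_tokens_details") or {}
    cached = details.get("cached_tokens", 0)
    uncached = prompt_tokens - cached
    billable = uncached + cached * (1 - cached_discount)
    return billable * price_per_million / 1_000_000


# Hypothetical logged usage for one request
usage = {
    "prompt_tokens": 2048,
    "completion_tokens": 300,
    "prompt_tokens_details": {"cached_tokens": 1024},
}
print(f"cached share: {cached_fraction(usage):.0%}")
print(f"input cost: ${effective_input_cost(usage, 2.50):.5f}")
```

If the cached share sits at zero across your logs, the static part of your prompt is either too short or not at the start.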

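Anthropic Prompt Caching is more powerful and requires explicit opt-in. You mark specific content blocks in your prompt with a cache_control field. Cache reads cost 10% of the regular input price (a 90% discount), while the request that writes the cache pays a 25% premium on those tokens. Prompts below the model's minimum cacheable length (1,024 tokens on Claude 3.5 Sonnet) are not cached. For static system prompts and documents that appear in many queries, this is transformative.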

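```python
# Anthropic prompt caching example
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

# Placeholder: in a real pipeline this is the user question plus retrieved chunks
user_query_with_context = "Context: ...\n\nQuestion: What is our return policy?"

response = client.messages.create(
    model="claude-3-5-sonnet-20241022",
    max_tokens=1024,
    system=[
        {
            "type": "text",
            "text": "You are a helpful assistant for our knowledge base...",
            # Marks the prompt up to and including this block as cacheable
            "cache_control": {"type": "ephemeral"},
        }
    ],
    messages=[{"role": "user", "content": user_query_with_context}],
)

# usage reports cache writes and cache reads separately
print(response.usage)
```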

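For a RAG application running 100,000 queries per day, here is the saving on 400 tokens of static system prompt, assuming they sit inside a prefix long enough to be cached and ignoring the cost of cache writes: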

- Without caching on Claude 3.5 Sonnet: 400 tokens x $3.00/million x 100,000 = $120/day

- With prompt caching: 400 tokens x $0.30/million x 100,000 = $12/day

- Monthly saving: $3,240/month from one API configuration change

Semantic Caching: The Numbers Behind the 40% Hit Rate Claim

You will read in every RAG optimization guide that semantic caching achieves 40% hit rates. That number is achievable but not guaranteed, and the threshold setting is what determines whether you get there or stay at 10%.

Here is how semantic caching actually works and where teams get it wrong:

Exact match caching (same query string) hits maybe 5% of queries in a diverse application. Not worth building dedicated infrastructure for.

Vector similarity caching (store query embeddings and responses, return cached response for new queries within cosine similarity threshold) is where real hit rates live. But the threshold determines everything:

- Similarity threshold of 0.98: only near-identical queries match. Hit rate: 8 to 12%.

- Similarity threshold of 0.94: semantically equivalent questions match. Hit rate: 25 to 35%.

- Similarity threshold of 0.88: broader matches, but false positives start appearing. Hit rate: 40 to 50%, but 5 to 8% of responses are wrong answers to subtly different questions.

The sweet spot for most enterprise knowledge base applications is 0.91 to 0.94. At this range you get genuine equivalence (questions phrased differently asking the same thing) without false positives from queries that are topically related but specifically different.
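
To make the threshold mechanics concrete, here is a toy in-memory version. It is a sketch only: the `SemanticCache` class is invented for illustration, it does a linear scan where a production system would use a vector index, and the query embeddings are assumed to come from whatever embedding model you already use. The default threshold of 0.92 is simply a value inside the range discussed above.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Toy cache keyed on query embeddings (linear scan, in memory)."""

    def __init__(self, threshold=0.92):
        self.threshold = threshold
        self.entries = []  # list of (embedding, cached value)

    def put(self, query_embedding, value):
        self.entries.append((list(query_embedding), value))

    def get(self, query_embedding):
        """Return the closest cached value, or None below the threshold."""
        best_score, best_value = 0.0, None
        for embedding, value in self.entries:
            score = cosine_similarity(query_embedding, embedding)
            if score > best_score:
                best_score, best_value = score, value
        return best_value if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.92)
cache.put([1.0, 0.0], "cached result for the return policy question")
hit = cache.get([0.99, 0.05])   # near-identical direction
miss = cache.get([0.7, 0.7])    # related but clearly different
```

Lowering `threshold` turns more lookups into hits, and that is exactly where the false positives described above come from, so it is worth replaying logged queries at several thresholds before settling on one.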

The open-source tool GPTCache implements this pattern and takes 3 to 4 hours to integrate. Redis with vector search support (Redis Stack) works for teams already running Redis. For high-query-volume applications, the engineering investment pays back in the first billing cycle.

One important implementation detail most guides omit: cache on the semantic embedding of the query, not on the LLM response. If your LLM generates slightly different responses to the same question on different days (temperature > 0), caching responses directly gives inconsistent experiences. Cache the retrieval result set instead. Same retrieved documents, stable response, coherent user experience.

Model Routing: The Optimization With the Best Return on Engineering Effort

Not every query in your RAG application requires your best and most expensive model. The teams that accept this premise and build around it fundamentally change their cost structure.

The classification is simpler than it sounds. In a typical enterprise knowledge base application, queries fall into identifiable categories:

Tier 1 (Simple retrieval, 40 to 55% of queries): Questions with direct answers in your corpus. "What is our return policy?" "When does the contract expire?" "What are the system requirements?" These queries need accurate retrieval and clean formatting. They do not need multi-step reasoning. GPT-4o-mini or Claude 3.5 Haiku handles them correctly.

Tier 2 (Synthesis and summarization, 30 to 40% of queries): Questions requiring information from multiple documents, comparative analysis, or structured responses. "Summarize the key terms from the three vendor proposals." "Compare our Q3 and Q4 performance metrics." Mid-tier models work well here.

Tier 3 (Complex reasoning, 10 to 20% of queries): Multi-step analysis, inference across ambiguous information, questions where the answer requires the model to reason through contradictions or gaps. Reserve your best model for these.

The routing mechanism does not need to be complex. A keyword-based classifier catches a large fraction of Tier 1 queries. A small fine-tuned classifier (distilbert-base-uncased at 67M parameters runs on a single CPU instance for essentially zero cost) handles the rest. The router itself costs less than $5 per month to run for most applications.
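
A first-pass keyword router might look like the sketch below. Everything in it is an assumption for illustration: the patterns, the 15-word limit, and the tier-to-model mapping are placeholders to tune against your own query logs, and `fallback_classifier` stands in for the small fine-tuned classifier mentioned above.

```python
import re
from typing import Callable, Optional

# Hypothetical patterns; tune them against your own query logs
TIER1_OPENERS = re.compile(
    r"^(what is|what are|when does|when is|where is|who is|how many)\b")
ESCALATION_TERMS = re.compile(
    r"\b(compare|summarize|why|versus|trade-?offs?|analy[sz]e)\b")

# Placeholder model names for tiers 2 and 3
MODEL_BY_TIER = {1: "gpt-4o-mini", 2: "mid-tier-model", 3: "best-model"}


def keyword_tier(query: str, max_words: int = 15) -> Optional[int]:
    """Return 1 for an obvious simple lookup, None when unsure."""
    q = query.strip().lower()
    if ESCALATION_TERMS.search(q):
        return None
    if len(q.split()) <= max_words and TIER1_OPENERS.match(q):
        return 1
    return None


def pick_model(query: str, fallback_classifier: Callable[[str], int]) -> str:
    """Use the keyword rule first, then defer to a learned classifier."""
    tier = keyword_tier(query) or fallback_classifier(query)
    return MODEL_BY_TIER[tier]


print(pick_model("What is our return policy?", lambda q: 3))
print(pick_model("Compare our Q3 and Q4 performance metrics.", lambda q: 2))
```

The keyword rule only ever says "definitely Tier 1" or "not sure", so a miss costs you some savings instead of sending a hard question to a weak model.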

The cost math when 50% of queries route to GPT-4o-mini instead of GPT-4o:

- Original: 100,000 queries/day x $0.0065 = $650/day

- Routed (50% to mini at ~$0.00039 each, from 1,200 input tokens x $0.15 per million plus 350 output tokens x $0.60 per million): $325 + $19.50 = $344.50/day

- Monthly saving: $9,165/month

- Annualized: $109,980/year

That is from routing, with no change to product quality for the 50% of queries where a cheaper model is fully sufficient.

Context Window Precision: Why More Retrieved Chunks Is Not Better

A common pattern in early RAG implementations is to retrieve more chunks to improve recall. Retrieve 10 or 15 chunks instead of 3, stuffing the context window with everything that might be relevant and letting the LLM figure it out.

This feels safe but is expensive and often counterproductive.

Here is the cost reality of context window stuffing:

- 3 chunks at 350 tokens each: 1,050 tokens of retrieved context

- 10 chunks at 350 tokens each: 3,500 tokens of retrieved context

- Difference: 2,450 additional input tokens per query

At GPT-4o pricing of $2.50 per million tokens:

- Per query cost difference: $0.006125

- At 100,000 queries per day: $612.50 extra per day = $18,375 extra per month

That is the price you pay for 7 additional chunks per query. The question is whether those 7 extra chunks actually improve your answers. In most well-tuned RAG systems with good semantic chunking and precise retrieval, the answer is: rarely.

The high-precision alternative to context stuffing:

- Retrieve more candidates (20 to 30) but use a lightweight cross-encoder or embedding similarity score to select only the top 3 to 5 most relevant ones before building the LLM context.

- A lightweight reranking step using a locally-hosted model (BGE Reranker v2 runs on CPU) costs essentially nothing and improves the precision of your top-3 selection dramatically.

- Result: 3 high-quality chunks in context, similar or better answer quality, 70% lower retrieved-context token cost than 10-chunk stuffing (1,050 tokens instead of 3,500).

The teams getting the best cost-quality tradeoffs are not using fewer retrieval resources. They are being more selective about what enters the LLM context.
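
The retrieve-wide, keep-few pattern reduces to a sort and a slice once you have a scoring function. In this sketch `select_context` is an invented helper, and `word_overlap` is a deliberately crude stand-in so the example runs without a model; in practice `score_fn` would wrap a cross-encoder or an embedding similarity score.

```python
from typing import Callable, List


def select_context(query: str, candidates: List[str],
                   score_fn: Callable[[str, str], float],
                   top_k: int = 3) -> List[str]:
    """Keep only the top_k candidates by relevance score."""
    ranked = sorted(candidates,
                    key=lambda chunk: score_fn(query, chunk),
                    reverse=True)
    return ranked[:top_k]


def word_overlap(query: str, chunk: str) -> float:
    """Toy scorer: share of query words that appear in the chunk."""
    q_words = set(query.lower().split())
    c_words = set(chunk.lower().split())
    return len(q_words & c_words) / len(q_words) if q_words else 0.0


candidates = [
    "our return policy covers electronics for 30 days",
    "shipping times vary by region",
    "the return policy excludes gift cards",
    "office hours are 9 to 5",
]
context = select_context("return policy for electronics", candidates,
                         word_overlap, top_k=2)
print(context)
```

Only the selected chunks go into the prompt, so the input token count tracks `top_k` and not the number of candidates retrieved.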

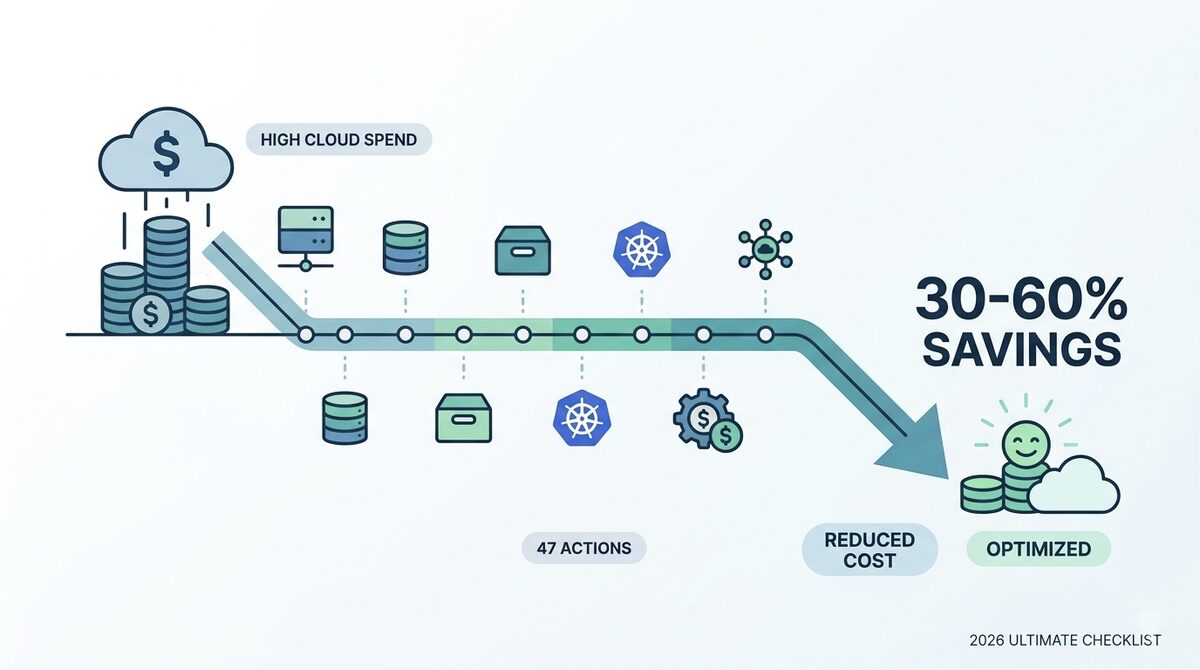

Calculating Your Real Gross Margin Impact

The conversation with investors is ultimately about gross margin. Here is how to translate per-query cost into a gross margin number and how different optimization levels change it.

Take an AI product with the following economics:

- Average revenue per user per month: $149

- Average queries per user per month: 1,500

- Current cost per query (unoptimized, GPT-4o): $0.006

- LLM cost per user per month: $9

At this baseline:

- LLM COGS as % of revenue: 6%

- Add infrastructure (vector DB, compute, storage): roughly 3 to 4%

- Total AI COGS: approximately 9 to 10% of revenue

- If other COGS (support, hosting, etc.) add another 15%: gross margin is approximately 75%
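
The same baseline can be written as a small function, and then inverted to ask what cost per query a target margin allows. This is a sketch with invented function names; the 3.5% infrastructure share is the midpoint of the 3 to 4% range above, and the 80% target is only an example.

```python
def gross_margin(cost_per_query: float, queries_per_user: float,
                 revenue_per_user: float, infra_share: float,
                 other_cogs_share: float) -> float:
    """Gross margin as a fraction of revenue, per user per month."""
    llm_share = cost_per_query * queries_per_user / revenue_per_user
    return 1.0 - llm_share - infra_share - other_cogs_share


def max_cost_per_query(target_margin: float, queries_per_user: float,
                       revenue_per_user: float, infra_share: float,
                       other_cogs_share: float) -> float:
    """Highest cost per query that still meets the target gross margin."""
    llm_budget_share = 1.0 - target_margin - infra_share - other_cogs_share
    return llm_budget_share * revenue_per_user / queries_per_user


# Baseline from above: $149 revenue, 1,500 queries, $0.006 per query
baseline_margin = gross_margin(0.006, 1500, 149, 0.035, 0.15)
budget = max_cost_per_query(0.80, 1500, 149, 0.035, 0.15)
print(f"baseline gross margin: {baseline_margin:.1%}")
print(f"cost per query allowed at an 80% margin: ${budget:.5f}")
```

Working backwards from a margin target gives you a per-query budget to design against before you pick a model.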

After implementing the optimizations in this guide:

- Model routing (50% Tier 1 to cheap model): cuts LLM cost to roughly $5 per user per month

- Output length control: further reduces to $4.50 per user per month

- Semantic caching at 30% hit rate: $4.50 x 0.70 = approximately $3.15 per user per month

- Prompt caching on Claude or OpenAI: reduces cached portions by 50 to 90%, saving up to roughly $0.75 more per user per month (left out of the total below to keep it conservative)

Optimized LLM COGS: $3.15 vs original $9. A reduction of 65%.

The gross margin impact: LLM COGS drops from 6% to 2.1% of revenue. Combined with infrastructure savings from vector database optimization (covered in the RAG infrastructure cost guide), total AI COGS can drop from 9 to 10% to 4 to 5% of revenue.

At $1 million ARR, that margin improvement is worth $50,000 to $60,000 per year in recovered gross profit. At $10 million ARR, it is $500,000 to $600,000. The math scales linearly while the engineering investment to achieve it is fixed.

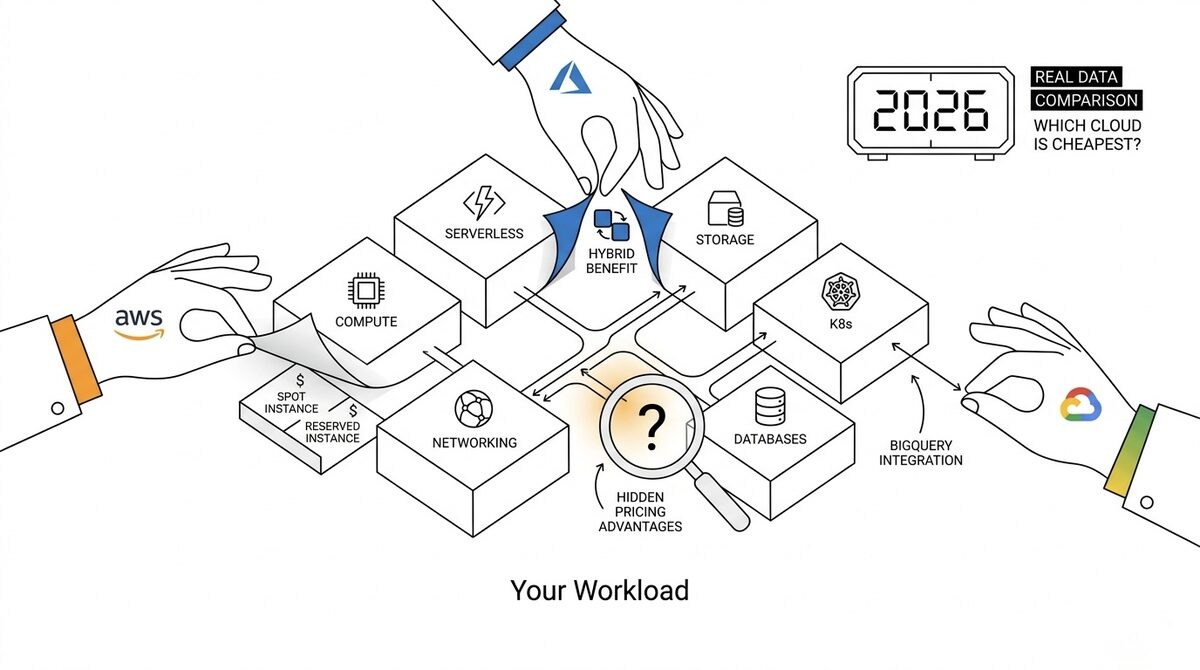

When Self-Hosting Models Makes Economic Sense

Self-hosting large language models is often proposed as the path to eliminating API costs. The reality is nuanced and most teams overestimate the savings.

Here is the cost math for self-hosting Llama 3.1 70B on AWS:

- Instance: g5.12xlarge (4x A10G GPU, 48 vCPU): $5.672/hour

- Throughput on Llama 3.1 70B with vLLM: approximately 2,000 to 4,000 tokens/second at batch size 8-16

- Cost per million tokens at 3,000 tokens/second: $5.672 / (3,000 x 3,600 / 1,000,000) = $0.525/million tokens
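
The formula in the last bullet assumes the GPUs are busy every second of the hour. A small helper makes that assumption explicit; the function name and the `utilization` parameter are additions for illustration, not part of any vendor tooling.

```python
def self_hosted_cost_per_million(hourly_price: float,
                                 tokens_per_second: float,
                                 utilization: float = 1.0) -> float:
    """USD per million tokens for an instance billed by the hour.

    utilization is the fraction of each hour spent serving tokens.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_price / (tokens_per_hour / 1_000_000)


full = self_hosted_cost_per_million(5.672, 3000)
at_70_percent = self_hosted_cost_per_million(5.672, 3000, utilization=0.7)
print(f"fully utilized: ${full:.3f} per million tokens")
print(f"70% utilized:   ${at_70_percent:.3f} per million tokens")
```

Running it across the 2,000 to 4,000 tokens/second range and a few utilization levels shows how quickly the per-token price moves with traffic.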

Compare to:

- GPT-4o-mini: $0.15 input + $0.60 output (average ~$0.375/million)

- Claude 3.5 Haiku: $0.80 input + $4.00 output (average ~$2.40/million)

- Cohere Command R: $0.50 input + $1.50 output (average ~$1.00/million)

Self-hosting Llama 3.1 70B is cheaper than Claude 3.5 Haiku and Command R for mixed input/output workloads, but more expensive than GPT-4o-mini on a pure cost-per-token basis. The breakeven analysis needs to include:

- MLOps engineering overhead: typically 0.25 to 0.5 FTE to maintain a self-hosted LLM serving stack

- GPU availability: g5.12xlarge Spot interruptions are infrequent but require handling

- Throughput scaling: self-hosted throughput scales linearly with cost, in whole-instance steps; API throughput scales with demand, and you pay only for the tokens you use

Self-hosting makes clear economic sense when:

- Your queries are highly domain-specific and a fine-tuned open model outperforms general API models at your task

- You have data sovereignty requirements that prevent sending data to third-party APIs

- Your query volume is high and predictable enough to sustain 70%+ GPU utilization (below that, idle GPU cost erodes the savings)

- You are running a model in the 8B to 13B range, where a single A10G instance handles meaningful throughput and the cost per million tokens can fall below the roughly $0.52 calculated above for 70B on four GPUs, provided utilization stays high

The FinOps Operating Model for RAG Applications

Technical optimization delivers one-time savings. An operating model delivers compounding savings as your application grows.

The FinOps practices that matter specifically for RAG unit economics are different from general cloud FinOps. Here is what works:

Instrument everything from day one. The OpenAI API, Anthropic API, and Cohere API all return token counts in every response. Log them. Not to a spreadsheet. To your observability stack (Datadog, Grafana, whatever you use). Set up a cost dashboard that shows:

- Average input tokens per query (by endpoint, by user tier, by feature)

- Average output tokens per query

- API cost per query trended over time

- Cache hit rate if you have semantic caching

Set cost-per-query SLOs alongside latency SLOs. Most teams set p95 latency targets. Almost no teams set cost targets. Specify that a given RAG endpoint should cost under $0.004 per query at p95. When a model update or prompt change pushes costs above that threshold, it triggers the same review process as a latency regression.
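
A cost SLO check can run over the same token counts you are already logging. The sketch below uses invented helper names, a two-model price table taken from the rates earlier in this guide, and a nearest-rank percentile; how the result reaches your alerting system is left out.

```python
import math

# (input, output) USD per million tokens, from the pricing table above
PRICES = {"gpt-4o": (2.50, 10.00), "gpt-4o-mini": (0.15, 0.60)}


def query_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def percentile(values, pct: int) -> float:
    """Nearest-rank percentile of a non-empty list."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def violates_cost_slo(costs, slo_usd: float = 0.004, pct: int = 95) -> bool:
    return percentile(costs, pct) > slo_usd


# Hypothetical logs: (model, input tokens, output tokens) per query
logs = [("gpt-4o-mini", 1200, 350)] * 19 + [("gpt-4o", 1200, 350)]
costs = [query_cost(*entry) for entry in logs]
print(f"p95 cost: ${percentile(costs, 95):.5f}")
print("SLO violated:", violates_cost_slo(costs))
```

Run it per endpoint on a rolling window so that a prompt change which inflates token counts shows up in the same place as a latency regression.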

Review token usage in every major feature release. New features often add system prompt instructions, additional retrieved context, or output format requirements that inflate token counts. Without a pre/post comparison, these additions accumulate invisibly. Track average tokens per query as a deployment metric alongside response times and error rates.

For teams that want structured FinOps governance applied to AI applications, FinOps consulting provides the frameworks to make this systematic rather than reactive.

The cloud operations practices around tagging and cost allocation extend directly to LLM workloads: tag your Lambda functions, containers, and API gateways by the RAG feature they serve, and you can allocate API costs by feature rather than seeing a single opaque line item in your OpenAI billing dashboard.

The Compounding Advantage of Getting RAG Costs Right Early

The difference between an AI product that scales profitably and one that hits a gross margin wall at Series B is rarely product quality. It is almost always unit economics.

The optimizations in this guide are not complex engineering projects. Prompt caching is a configuration change. Output length guidance is a single sentence in your system prompt. Model routing with a keyword classifier is a one-sprint implementation. Semantic caching with GPTCache is a half-day integration. Together, they can reduce your RAG cost per query by 60 to 70% without your users noticing any change in the product.

What changes is your gross margin, your FinOps story for investors, and your ability to price competitively without subsidizing customer usage.

For teams scaling RAG applications and wanting structured support across both the infrastructure and the AI cost layers, the Kubernetes cost optimization guide covers the GPU and compute side, and the cloud cost monitoring tools guide covers how to make this spending visible at the granularity needed to manage it.

Cloud cost optimization and FinOps consulting from LeanOps bring the operating model and governance layer that turn these one-time optimizations into a sustainable cost discipline as your AI product scales.

External resources: OpenAI pricing and token calculator, Anthropic prompt caching documentation, and GPTCache open source project for semantic caching implementation.