The RAG Bill Nobody Budgeted For

Let me share something that catches almost every engineering team off guard: the most expensive part of your RAG system is almost certainly not the vector database.

Most teams budget carefully for Pinecone, Weaviate, or Qdrant. They compare pod pricing, calculate storage costs, and feel confident about the numbers. Then the bill arrives and it is 3x what they projected.

What happened? The embedding generation costs, the reranking model calls, the re-indexing jobs that run every time someone tweaks a chunking parameter, and the LLM API calls for failed retrievals all sit outside the vector database line item. Together they account for 60 to 70% of total RAG infrastructure cost for most production deployments.

This guide covers the full RAG cost stack, not just the database. By the time you finish reading, you will know where your money is actually going, which optimizations deliver real savings, and which popular advice actually costs you more than it saves.

Why RAG Costs Explode in the First Year

Here is a realistic breakdown of what drives RAG costs at scale, ranked by what surprises teams most:

1. Embedding generation is often the largest single line item

If you are using OpenAI's text-embedding-3-large (the default that many tutorials recommend), you are paying $0.13 per million tokens. text-embedding-3-small costs $0.02 per million tokens, and in most RAG applications, the retrieval quality difference is negligible.

For a corpus of 100 million tokens, a full re-embedding run costs $13 on text-embedding-3-large and $2 on text-embedding-3-small, a difference of $11 per run. That sounds small until you realize that unstable chunking strategies, schema changes, or model switches cause multiple re-indexing runs per month. Teams that re-index weekly on text-embedding-3-large pay $676 per year in embedding costs for that one corpus, $572 more than the same schedule would cost on text-embedding-3-small.
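The arithmetic is easy to rerun for your own corpus and re-indexing cadence. A minimal sketch, using the per-million-token prices quoted above (the function name and the weekly schedule are only illustrative):

```python
# Prices in dollars per million tokens, as quoted in this article.
PRICE_PER_MILLION_TOKENS = {
    "text-embedding-3-large": 0.13,
    "text-embedding-3-small": 0.02,
}

def reembedding_cost(corpus_tokens: int, model: str, runs: int = 1) -> float:
    """Dollar cost of embedding the whole corpus `runs` times."""
    return corpus_tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS[model] * runs

corpus_tokens = 100_000_000
large_yearly = reembedding_cost(corpus_tokens, "text-embedding-3-large", runs=52)
small_yearly = reembedding_cost(corpus_tokens, "text-embedding-3-small", runs=52)
print(f"large: ${large_yearly:.2f}/yr, small: ${small_yearly:.2f}/yr, "
      f"gap: ${large_yearly - small_yearly:.2f}/yr")
```

Swap in your real token count and the number of full re-index runs you actually performed last quarter; the second number is usually the surprising one.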

2. HNSW indexes consume far more RAM than teams expect

The HNSW algorithm is the standard for approximate nearest neighbor search. It is fast and accurate. It is also memory-hungry in a way the documentation does not make obvious.

Here is the math that matters: a corpus of 1 million vectors at OpenAI's 1536-dimensional size requires roughly 6 GB of raw float32 storage (1,000,000 × 1,536 × 4 bytes). With the HNSW graph and working overhead on top, plan for 1.5 to 2x the raw size: 9 to 12 GB of RAM just to serve that index. At 5 million vectors, you are looking at 45 to 60 GB of RAM before query overhead.

This is why teams expecting a $200/month instance end up on a $1,200/month instance. The raw vectors would fit comfortably on disk, but an HNSW index has to stay in RAM to be fast.
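A rough sizing helper makes this estimate repeatable. It is a sketch that assumes float32 vectors and takes the 1.5 to 2x total-footprint range from this section as given; real overhead depends on the engine and its HNSW settings.

```python
def hnsw_ram_gb(num_vectors: int, dims: int, bytes_per_value: int = 4,
                overhead_range: tuple = (1.5, 2.0)) -> tuple:
    """Return (raw_gb, low_estimate_gb, high_estimate_gb) for an in-memory index.

    overhead_range is the multiplier on raw vector size used in this article,
    not a measured property of any specific database.
    """
    raw_gb = num_vectors * dims * bytes_per_value / 1e9
    low, high = overhead_range
    return raw_gb, raw_gb * low, raw_gb * high

for n in (1_000_000, 5_000_000):
    raw, low, high = hnsw_ram_gb(n, 1536)
    print(f"{n:>9,} vectors: raw {raw:.1f} GB, plan for {low:.0f} to {high:.0f} GB RAM")
```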

3. Chunking strategy directly multiplies your vector count and your bill

This one is free to fix, and almost nobody fixes it before it becomes expensive. Naive fixed-size chunking (512 tokens, overlap 50, done) creates 3 to 5x more vectors than semantic chunking of the same corpus. More vectors mean more storage, more RAM for your index, more compute per query, and higher managed service costs.

Semantic chunking groups content by meaning rather than by token count. A paragraph about AWS pricing stays together rather than being split mid-sentence across two chunks. Retrieval quality improves and vector count drops by 40 to 60%. Both outcomes reduce costs at the same time.
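Before committing to a strategy, it helps to count the vectors each one would produce on a sample of your corpus. The sketch below compares fixed-size windows with a simple paragraph-packing chunker. Paragraph packing is only a crude stand-in for true semantic chunking, and whitespace-separated words are used as a proxy for tokens; both are assumptions made for illustration.

```python
def fixed_size_chunks(tokens, size=512, overlap=50):
    """Split a token list into windows of `size` that share `overlap` tokens."""
    if not tokens:
        return []
    step = size - overlap
    last_start = max(len(tokens) - overlap, 1)
    return [tokens[i:i + size] for i in range(0, last_start, step)]

def paragraph_chunks(text, max_tokens=512):
    """Pack whole paragraphs into chunks without splitting a paragraph.

    A single paragraph longer than max_tokens is kept as one oversized chunk.
    """
    chunks, current = [], []
    for paragraph in text.split("\n\n"):
        words = paragraph.split()
        if not words:
            continue
        if current and len(current) + len(words) > max_tokens:
            chunks.append(current)
            current = []
        current.extend(words)
    if current:
        chunks.append(current)
    return chunks

def compare_vector_counts(text, size=512, overlap=50):
    """Return (fixed_size_count, paragraph_count) for one document."""
    return (len(fixed_size_chunks(text.split(), size, overlap)),
            len(paragraph_chunks(text, size)))
```

Run `compare_vector_counts` over a few hundred representative documents and sum the results; the ratio between the two totals is a direct preview of the difference in index size.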

4. Query compute scales with your ef_search parameter setting

In HNSW, the ef_search parameter controls how many candidates the algorithm considers before returning results. Defaults vary by library, and many production configurations set the value high for accuracy. Typical tradeoffs look like this:

- ef_search = 200: roughly 99% recall, high compute per query

- ef_search = 100: roughly 97% recall, 40% less compute

- ef_search = 50: roughly 95% recall, 60 to 70% less compute

For most RAG applications, the difference between 95% and 99% recall is invisible to end users. The difference in query cost at 10 million queries per month is not invisible at all.

5. Multi-tenant architectures multiply base costs regardless of data size

Many B2B SaaS products build one vector index per customer. With 50 customers, you have 50 indexes. With 200 customers, you have 200 indexes. Each index carries base overhead: memory reservation, replication configuration, background maintenance tasks.

The fix: use namespaces (Pinecone) or a single collection with tenant-ID payload filtering (Qdrant) to isolate tenant data within a shared index structure. This architectural shift alone can reduce per-tenant infrastructure costs by 80 to 90% while preserving logical data isolation, provided every query is scoped to its tenant.

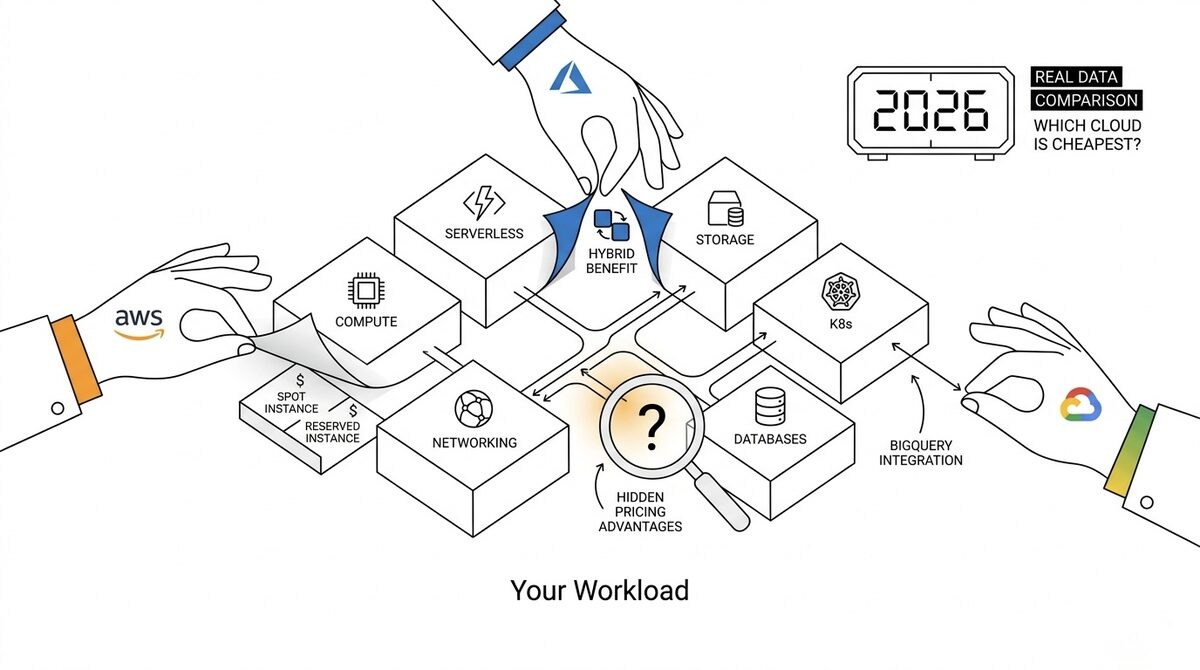

The Honest Vector Database Cost Comparison

Most vector database comparisons talk about features. This one talks about cost thresholds, because the right database depends almost entirely on your query volume and corpus size.

| Database | Best For | Cost Reality |
|---|---|---|
| pgvector (PostgreSQL extension) | Under 2M vectors, existing PostgreSQL stack | Near-zero incremental cost; runs on your existing RDS or Aurora instance |
| Qdrant self-hosted | 2M to 50M vectors, cost-sensitive teams | Open source, scalar quantization cuts memory by 4x, $0 licensing |
| Pinecone Serverless | Prototyping, variable and unpredictable traffic | $0.033/GB/month storage + $0.08/million read units; expensive at high query volumes |
| Pinecone Pod-based | Production with predictable high-volume queries | p1 pod at roughly $69/month minimum; cheaper than Serverless above ~10M queries/month |
| Weaviate Cloud | Hybrid search (keyword + vector), complex filtering | $0.145/million requests above free tier; better for BM25 plus vector workloads |
| OpenSearch with k-NN | AWS-heavy stacks, teams already on OpenSearch | Pay for the OpenSearch cluster you already have; no additional licensing cost |

The insight that saves the most money: for teams in early stages with under 2 million vectors, pgvector running on an existing PostgreSQL instance costs under $10/month in incremental storage and compute. The operational complexity of a dedicated vector database is simply not justified at that scale. Start with pgvector and migrate when you hit its limits. Most teams hit those limits much later than they expect.

10 RAG Cost Optimizations That Actually Work

1. Reduce Embedding Dimensions Before You Scale

OpenAI's text-embedding-3-small and text-embedding-3-large both support Matryoshka Representation Learning, which means you can shorten the vectors (through the API's dimensions parameter) without retraining the model. Going from 1536 to 512 dimensions cuts vector storage by two-thirds and query compute roughly in proportion.

The quality tradeoff is real but manageable. For most domain-specific RAG applications with focused corpora, 512-dimensional embeddings perform within 2 to 3% of full-dimension embeddings on standard retrieval benchmarks. Holding on to that last 2 to 3% of quality costs you a significant fraction of your entire infrastructure bill. Run the benchmark on your actual data before deciding.
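If you already have full-length vectors stored and want to benchmark shorter ones without paying for a new embedding run, you can truncate locally. A minimal sketch, assuming the vectors come from a model trained for this kind of truncation; the key step is renormalizing to unit length after cutting, since cosine and dot-product scores otherwise drift.

```python
import math

def truncate_embedding(vector, dims):
    """Keep the first `dims` values and rescale the result to unit length."""
    head = list(vector[:dims])
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return head  # an all-zero vector cannot be normalized
    return [x / norm for x in head]

full = [3.0, 4.0, 12.0]          # toy 3-dimensional "embedding"
short = truncate_embedding(full, 2)
print(short)                      # unit-length 2-dimensional vector
```

Run your recall benchmark once on the full vectors and once on the truncated ones, using the same queries, before changing what you store.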

2. Build an Embedding Cache for Repeat Queries

When users ask similar questions, they generate similar query embeddings. If two queries have cosine similarity above 0.97, they are functionally identical for retrieval purposes. A Redis cache with a similarity check at query time lets you skip the vector search (and any reranking) for those near-duplicates, and an exact-match lookup on the normalized query text in front of it skips the embedding API call as well. Together the two layers can cut embedding and retrieval calls by 30 to 70% depending on your application's query patterns.

The implementation is straightforward: check the exact-match cache first; on a miss, embed the incoming query, check the cache for nearby vectors, and return the cached retrieval results if similarity exceeds your threshold. This is not semantic caching of LLM responses (which has cache invalidation problems). It is caching retrieval results keyed by query vectors, which stay valid until the underlying documents are re-indexed. This optimization is almost never implemented in early-stage RAG systems but has major cost impact at scale.
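The similarity check itself is only a few lines. The sketch below keeps entries in a plain in-memory list rather than Redis and scans them linearly, which is an assumption made to keep the example self-contained; the class and method names are hypothetical, and the 0.97 threshold is the one suggested above.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class QueryResultCache:
    """Caches retrieval results keyed by the query vector that produced them."""

    def __init__(self, threshold=0.97):
        self.threshold = threshold
        self.entries = []  # list of (query_vector, retrieval_results)

    def get(self, query_vector):
        """Return cached results for the closest stored query, or None on a miss."""
        best_similarity, best_results = 0.0, None
        for stored_vector, results in self.entries:
            similarity = cosine(query_vector, stored_vector)
            if similarity > best_similarity:
                best_similarity, best_results = similarity, results
        return best_results if best_similarity >= self.threshold else None

    def put(self, query_vector, results):
        self.entries.append((list(query_vector), results))
```

On a hit you skip the vector search entirely. Clear or version the cache whenever the corpus is re-indexed, because the cached document IDs may no longer be the best matches.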

3. Tune ef_search to Your Actual Recall Requirements

Run a recall benchmark on a sample of your real queries at ef_search values of 50, 100, and 200. In most domain-specific RAG applications, ef_search = 50 to 75 gives you 94 to 96% recall on actual user questions. That is indistinguishable from ef_search = 200 in production usage.

Reduce ef_search to the minimum value where retrieval quality meets your application requirements. This single change reduces per-query compute by 40 to 70% with no infrastructure change and no user experience impact. It is genuinely one of the highest-leverage optimizations available and almost no tutorial mentions it.
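The benchmark needs two things: exact nearest neighbors for a sample of queries (from a brute-force search) and the approximate results at each ef_search value. Given those, picking the setting is mechanical. A sketch, with the function names and input shapes chosen for illustration:

```python
def recall_at_k(approx_results, exact_results):
    """Fraction of true neighbors that the approximate search also returned.

    Both arguments are lists with one list of document IDs per query.
    """
    hits = sum(len(set(approx) & set(exact))
               for approx, exact in zip(approx_results, exact_results))
    total = sum(len(exact) for exact in exact_results)
    return hits / total if total else 0.0

def smallest_ef_meeting_target(results_by_ef, exact_results, target_recall):
    """Return the lowest ef_search whose recall meets the target, or None."""
    for ef in sorted(results_by_ef):
        if recall_at_k(results_by_ef[ef], exact_results) >= target_recall:
            return ef
    return None

# Toy data: two queries, top-4 ground truth, results captured at two settings.
exact = [[1, 2, 3, 4], [5, 6, 7, 8]]
results_by_ef = {
    50: [[1, 2, 3, 9], [5, 6, 7, 8]],
    100: [[1, 2, 3, 4], [5, 6, 7, 8]],
}
print(smallest_ef_meeting_target(results_by_ef, exact, target_recall=0.95))
```

Use a few hundred real user queries rather than synthetic ones; recall on benchmark datasets rarely matches recall on your own traffic.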

4. Implement a Two-Tier Retrieval Architecture

Not all documents in a RAG corpus are queried equally. In most enterprise knowledge bases, 20% of documents receive 80% of retrieval requests. The remaining 80% sit in your index consuming RAM and contributing to index overhead around the clock.

Build a two-tier system: a hot index with recent and frequently retrieved documents on your primary vector database, and a cold store with a lighter-weight index on a smaller instance for archival content. Most queries never touch the cold tier, but it is there when needed. Storage and memory costs on the hot tier drop by 40 to 60%.
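One way to route between the tiers is to query the hot index first and fall back to the cold one only when the hot results look weak. The fallback rule here (too few hits, or a best score under a threshold) is an assumption, as are the `search_hot` and `search_cold` callables, which stand in for whatever client calls your databases expose. The sketch also assumes each document lives in exactly one tier.

```python
def two_tier_search(query_vector, search_hot, search_cold, k=5, min_score=0.75):
    """Search the hot tier; consult the cold tier only when hot results are weak.

    search_hot and search_cold take (query_vector, k) and return a list of
    (doc_id, score) pairs where a higher score means a better match.
    """
    hits = search_hot(query_vector, k)
    strong_enough = len(hits) >= k and max(score for _, score in hits) >= min_score
    if strong_enough:
        return hits
    merged = hits + search_cold(query_vector, k)
    merged.sort(key=lambda hit: hit[1], reverse=True)
    return merged[:k]
```

Log how often the fallback fires. If it fires on more than a small share of queries, the hot tier is missing documents that belong in it.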

5. Stabilize Your Chunking Strategy Before Indexing at Scale

Every time you change your chunking parameters, you need to re-embed and re-index your entire corpus. This is expensive and time-consuming, and it is almost always avoidable with proper upfront planning.

The mistake teams make: they optimize chunking in production. They change overlap from 50 to 100 tokens, see better retrieval, redeploy. Then change it again a week later. Each iteration costs a full embedding API run across the entire corpus.

Do your chunking experiments on a sample of 10,000 to 50,000 documents, which is usually statistically representative. Lock the parameters before indexing at scale. A stable chunking strategy saves both embedding API costs and engineering time.

6. Enable Scalar Quantization on Memory-Heavy Indexes

Qdrant and Weaviate support scalar quantization, which compresses 32-bit floating point vectors to 8-bit integers. This reduces vector memory by approximately 4x with a typical quality loss of 2 to 5% on retrieval benchmarks. Newer versions of pgvector offer a related option, half-precision (halfvec) storage, which halves vector memory instead.

For a 10 million vector corpus at 1536 dimensions, the float32 vectors alone occupy roughly 60 GB of RAM. Scalar quantization brings that down to approximately 15 GB. The difference between a 64 GB instance and a 16 GB instance is often $400 to $600 per month on AWS or GCP. For self-hosted Qdrant, this is a short quantization block in the collection configuration.
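To see where the 4x comes from, here is the idea in miniature: map each float onto one of 256 levels and store a single byte per dimension. This sketch uses a per-vector min/max range for simplicity; production engines typically calibrate the range across the whole collection, so treat it as an illustration of the principle rather than any database's implementation.

```python
def quantize_int8(vector):
    """Map floats onto 256 evenly spaced levels; returns (codes, offset, scale)."""
    low, high = min(vector), max(vector)
    scale = (high - low) / 255 or 1.0  # avoid a zero scale for constant vectors
    codes = bytes(min(255, round((x - low) / scale)) for x in vector)
    return codes, low, scale

def dequantize_int8(codes, offset, scale):
    """Approximate reconstruction of the original floats."""
    return [offset + code * scale for code in codes]

vector = [0.1, -0.3, 0.8, 0.25]
codes, offset, scale = quantize_int8(vector)
restored = dequantize_int8(codes, offset, scale)
print(len(codes), "bytes instead of", 4 * len(vector))
```

The reconstruction error per value is bounded by roughly half of `scale`, which is why recall drops slightly rather than collapsing.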

7. Batch Your Embedding Requests to the Maximum Allowed Size

The OpenAI embeddings API supports batches of up to 2,048 inputs per request. Many teams process documents one at a time in a loop, which means 2,048 separate API calls instead of 1. Beyond the higher latency, this creates inefficient throughput and hits rate limits faster.

Batch your embedding pipeline to send the maximum allowed inputs per request. For teams running nightly re-indexing jobs, this change alone can reduce embedding job duration by 80 to 90%, which directly reduces the compute cost of the worker running the job and the time window where it holds resources.
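A batching wrapper is a small change to most pipelines. In the sketch below, `embed_batch` is a hypothetical callable that wraps your provider's embeddings endpoint and returns one vector per input text. The sketch splits by input count only; requests are also subject to token limits, which it does not check.

```python
MAX_INPUTS_PER_REQUEST = 2048  # per-request input limit cited above

def batched(texts, batch_size=MAX_INPUTS_PER_REQUEST):
    """Yield consecutive lists of at most batch_size texts."""
    batch = []
    for text in texts:
        batch.append(text)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch

def embed_corpus(texts, embed_batch):
    """Embed all texts with one call to embed_batch per batch, preserving order."""
    vectors = []
    for batch in batched(texts):
        vectors.extend(embed_batch(batch))
    return vectors
```

For 5,000 chunks this makes three requests instead of 5,000. The per-token price is unchanged; the savings come from shorter job duration and fewer rate-limit retries.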

8. Benchmark Reranking Before Making It Permanent

Cross-encoder reranking models (like Cohere Rerank or a self-hosted BGE Reranker) add a second stage to retrieval: the pipeline pulls a large candidate set from your vector database, then runs a more expensive model to re-score and filter the results. The idea is to improve precision on the final answer context.

The cost reality: reranking adds latency and cost to every single query. For well-configured indexes with tight chunking and appropriate ef_search values, reranking often improves precision by less than 5% on domain-specific corpora. That 5% may not justify 30 to 40% higher per-query cost.

Benchmark reranking on your actual queries before making it a permanent part of your pipeline. If retrieval quality is already meeting your requirements without it, reranking is expensive insurance against a problem you do not have.

9. Delete Orphaned Indexes on a Monthly Cadence

This sounds obvious. It is not done often enough. Most vector database deployments accumulate indexes from experiments, prototypes, and deprecated features. These indexes sit in memory and storage permanently, contributing to your bill with zero query traffic.

Implement an index usage log that records the last query timestamp for each index or collection. Set a policy: any index with no queries in 30 days gets archived to cold storage or deleted. For managed services like Pinecone, orphaned pod indexes at $69/month each add up faster than teams realize. Running a monthly orphan audit typically surfaces 10 to 20% of total vector database spend as immediately recoverable waste.
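The audit itself is trivial once the usage log exists. A sketch, assuming the log is a mapping from index name to the timestamp of its last query (None for indexes that have never been queried); how you collect those timestamps depends on your database and is not shown.

```python
from datetime import datetime, timedelta, timezone

def find_orphaned_indexes(last_query_at, now=None, max_idle_days=30):
    """Return names of indexes with no queries in the last max_idle_days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_idle_days)
    return sorted(
        name for name, last_seen in last_query_at.items()
        if last_seen is None or last_seen < cutoff
    )

usage_log = {
    "prod-docs": datetime(2025, 6, 29, tzinfo=timezone.utc),
    "chunking-experiment-3": datetime(2025, 4, 1, tzinfo=timezone.utc),
    "demo-prototype": None,
}
audit_date = datetime(2025, 6, 30, tzinfo=timezone.utc)
print(find_orphaned_indexes(usage_log, now=audit_date))
```

Have the job report candidates for review rather than delete them automatically; a quarterly batch index can legitimately sit idle for more than 30 days.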

10. Use Namespace Isolation Instead of Separate Indexes for Multi-Tenant Systems

If your RAG system serves multiple tenants, the architecture decision between one-index-per-tenant and shared-index-with-namespace is a cost multiplier, not just a design preference.

One index per tenant: each carries its own memory overhead, replication factor, and base resource allocation. At 50 tenants, this means 50x the base infrastructure cost regardless of how small each tenant's corpus is.

Shared index with namespace isolation: all tenants share infrastructure resources, with namespaces or metadata filters keeping each tenant's data separate. Memory footprint is proportional to total vector count, not tenant count. For a typical B2B SaaS product, this architecture is 80 to 90% cheaper at scale, and logical isolation holds as long as the tenant filter is enforced on every query.
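The safest way to run a shared index is to make it impossible to query without a tenant. The toy in-memory index below illustrates the pattern; it is not any vendor's API, and the class name and dot-product scoring are assumptions. The point is that the tenant filter lives inside the search method, not in the calling code.

```python
class SharedTenantIndex:
    """One shared store for all tenants, with a mandatory tenant filter."""

    def __init__(self):
        self._rows = []  # list of (tenant_id, doc_id, vector)

    def upsert(self, tenant_id, doc_id, vector):
        self._rows.append((tenant_id, doc_id, list(vector)))

    def search(self, tenant_id, query_vector, k=3):
        """Return the top-k (doc_id, score) pairs among this tenant's rows only."""
        if not tenant_id:
            raise ValueError("tenant_id is required for every query")
        scored = [
            (doc_id, sum(q * v for q, v in zip(query_vector, vector)))
            for owner, doc_id, vector in self._rows
            if owner == tenant_id
        ]
        scored.sort(key=lambda hit: hit[1], reverse=True)
        return scored[:k]
```

With a managed database, the equivalent is a thin wrapper around the client that injects the namespace or filter and is the only code path allowed to issue queries.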

The Full RAG Cost Stack: Where the Money Actually Goes

Understanding the complete cost picture is what separates teams that manage RAG costs effectively from teams that are perpetually surprised by their bills.

| Cost Layer | What It Covers | How to Control It |
|---|---|---|
| Embedding generation | API calls to create vectors from documents and queries | Batch requests, smaller dimensions, query embedding cache |
| Vector database storage | Holding your index on disk | Tiered storage, archiving cold documents, lifecycle policies |
| Vector database memory | HNSW index in RAM | Scalar quantization, dimension reduction, hot/cold architecture |
| Query compute | CPU consumed per similarity search | ef_search tuning, index size reduction via better chunking |
| Reranking | Cross-encoder re-scoring of retrieved candidates | Benchmark before adopting; often adds cost without proportional quality gain |
| Re-indexing jobs | Full corpus re-embedding when pipeline changes | Stabilize chunking strategy; use incremental indexing for changed documents only |

Teams that achieve sustainable RAG costs look at all six layers together. Optimizing one layer in isolation often pushes cost pressure onto another layer that was previously controlled. You need the full picture.

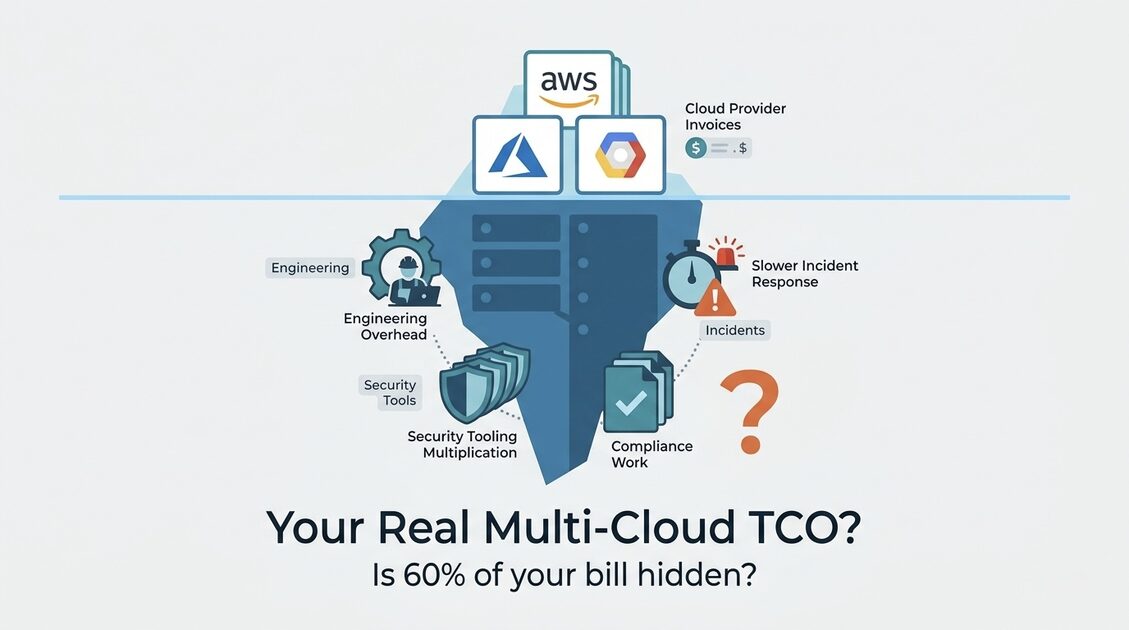

Applying FinOps Principles to RAG Infrastructure

The technical optimizations above only deliver lasting value if your organization has visibility into costs at the workload level. This is where FinOps practice meets RAG infrastructure: tagging, allocation, and accountability structures that make it possible to understand cost per AI feature, per customer, or per model version.

The tagging strategy that matters for RAG:

Every vector database cluster, every embedding pipeline job, and every reranking endpoint should be tagged with at minimum: the application it serves, the team that owns it, the environment (production, staging, development), and the embedding model version it uses. This level of attribution is what enables the weekly cost reviews that actually change behavior.
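A tagging policy only works if something checks it. The sketch below flags resources that are missing any of the four tags listed above. The tag key names are hypothetical, and the input is assumed to be a mapping you have already exported from your cloud provider's inventory or billing data.

```python
# Hypothetical tag keys for the four attributes described above.
REQUIRED_TAGS = ("application", "team", "environment", "embedding_model_version")

def missing_tags(resource_tags):
    """Return the required tag keys that are absent or empty on one resource."""
    return [key for key in REQUIRED_TAGS if not resource_tags.get(key)]

def untagged_resources(resources):
    """Map resource name -> missing tag keys, for resources that fail the policy."""
    report = {}
    for name, tags in resources.items():
        gaps = missing_tags(tags)
        if gaps:
            report[name] = gaps
    return report

inventory = {
    "qdrant-prod": {"application": "support-bot", "team": "search",
                    "environment": "production",
                    "embedding_model_version": "text-embedding-3-small"},
    "embed-nightly-job": {"application": "support-bot", "team": "search"},
}
print(untagged_resources(inventory))
```

Running this in CI or as a weekly report keeps unattributed spend from accumulating between cost reviews.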

For teams running AI workloads alongside more traditional cloud infrastructure, the cloud operations practices that govern compute and storage extend naturally to vector databases with some adjustments for the unique memory and query patterns of this workload type.

The FinOps Foundation has published guidance specifically on ML and AI cost patterns that is worth reviewing alongside your team's actual usage data, particularly their framework for separating training costs, inference costs, and data pipeline costs as distinct line items.

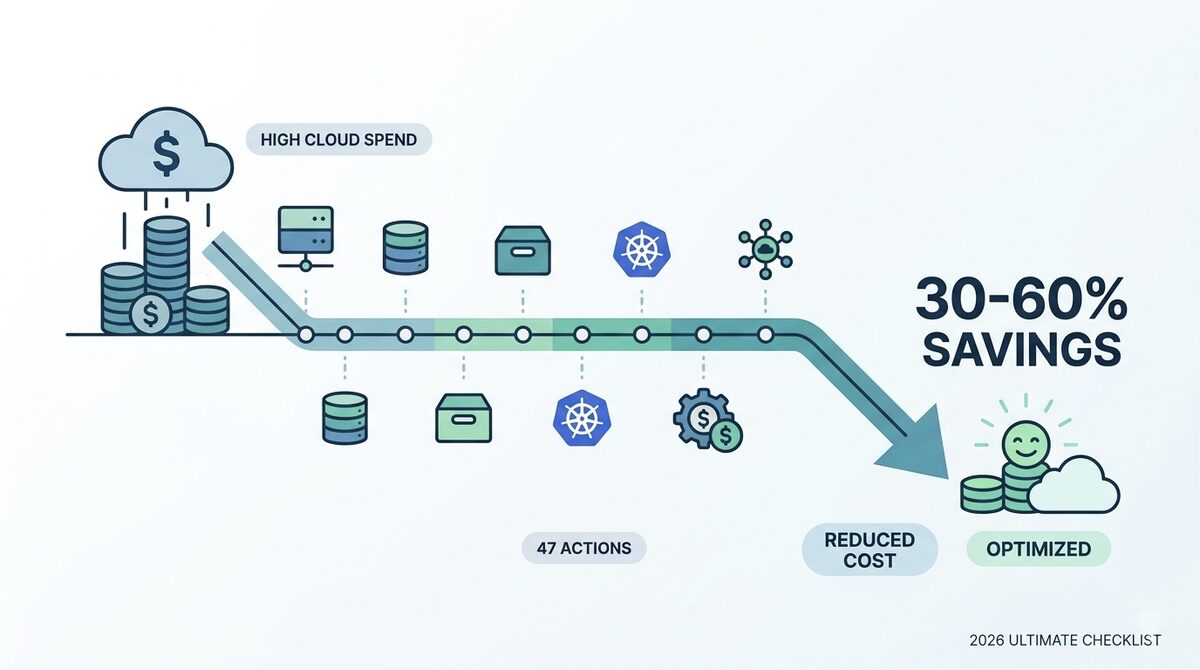

The Compound Return on Getting This Right

RAG is not a "set it and forget it" infrastructure investment. It is a system with compounding costs and compounding returns depending on how rigorously you manage it.

The teams that keep RAG costs sustainable long-term share a few practices: they track cost at the feature or tenant level rather than just the cloud account level, they treat re-indexing as a meaningful engineering event with a measurable cost rather than a routine cron job, and they benchmark ef_search and embedding dimensions against their actual retrieval quality requirements rather than accepting the library defaults.

The three highest-leverage changes to make first: audit your embedding dimensions and run the quality benchmark, stabilize your chunking strategy and stop iterating on it in production, and tune ef_search on your production query sample. Most teams that apply these three changes report 30 to 50% reductions in monthly RAG infrastructure costs without touching the database architecture at all. That is real money recovered, not theoretical savings on a roadmap.

For organizations building AI infrastructure at scale and needing structured cost governance alongside technical optimization, the AWS cost optimization practices that apply to general infrastructure extend directly to the compute and storage layers of RAG deployments.

Related reading: the real-time cloud cost monitoring tools guide covers how to apply anomaly detection to AI workload costs, and the cloud cost optimization for modern infrastructure guide addresses serverless and container cost patterns that often run alongside RAG pipelines.

External resources: FinOps Foundation on ML and AI cost patterns, Qdrant quantization documentation, and pgvector HNSW implementation notes on GitHub.