The Per-GB Price Is Irrelevant at 500TB. Total Cost of Ownership Is Everything.

When your storage footprint reaches 500TB of unstructured data, the pricing dynamics fundamentally change. The difference between $0.006/GB and $0.023/GB is not theoretical: it is $8,500 per month, $102,000 per year. At this scale, choosing the wrong provider or the wrong storage tier costs more than a senior engineer's salary.

But the per-GB rate is only the beginning. At 500TB, egress fees, retrieval costs, API operations, and replication charges can double or triple your effective storage bill. We have modeled the true total cost of ownership for 500TB of unstructured data (engineering CAD files, video surveillance archives, research datasets, media libraries) across every major cloud provider in 2026.

The answer is not "use the cheapest per-GB option." The answer depends on how often you access the data, how much you retrieve at once, and whether you need geographic redundancy.

Provider-by-Provider Storage Cost at 500TB

All prices are USD as of May 2026. Storage costs only (before egress, API, and retrieval fees).

| Provider | Tier | Cost/GB/Month | 500TB Monthly | Annual |

|---|---|---|---|---|

| AWS S3 Standard | Hot | $0.023 | $11,500 | $138,000 |

| AWS S3 Infrequent Access | Warm | $0.0125 | $6,250 | $75,000 |

| AWS S3 Glacier Instant Retrieval | Cold (ms access) | $0.004 | $2,000 | $24,000 |

| AWS S3 Glacier Deep Archive | Archive | $0.00099 | $495 | $5,940 |

| Google Cloud Storage Standard | Hot | $0.020 | $10,000 | $120,000 |

| Google Cloud Storage Coldline | Cold | $0.004 | $2,000 | $24,000 |

| Google Cloud Storage Archive | Archive | $0.0012 | $600 | $7,200 |

| Azure Blob Hot | Hot | $0.0184 | $9,200 | $110,400 |

| Azure Blob Cool | Warm | $0.010 | $5,000 | $60,000 |

| Azure Blob Archive | Archive | $0.00099 | $495 | $5,940 |

| Cloudflare R2 | Single tier | $0.015 | $7,500 | $90,000 |

| Wasabi | Single tier | $0.0069 | $3,450 | $41,400 |

| Backblaze B2 | Single tier | $0.006 | $3,000 | $36,000 |

The spread between cheapest and most expensive active storage is nearly 4x ($3,000 vs $11,500/month). But this table is dangerously incomplete. It does not include the costs that actually determine your bill at scale.
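The storage columns above follow from one multiplication. The sketch below reproduces a few rows, assuming decimal units (500TB = 500,000GB) as the table does; the function name and the selection of providers are illustrative only.

```python
GB_PER_TB = 1_000  # decimal terabytes, matching the table's 500TB = 500,000GB


def monthly_storage_cost(rate_per_gb: float, terabytes: float = 500) -> float:
    """Return the monthly storage charge in USD for a flat per-GB rate."""
    return rate_per_gb * terabytes * GB_PER_TB


# Per-GB rates copied from the table above.
rates = {
    "AWS S3 Standard": 0.023,
    "Cloudflare R2": 0.015,
    "Wasabi": 0.0069,
    "Backblaze B2": 0.006,
}

for provider, rate in rates.items():
    monthly = monthly_storage_cost(rate)
    print(f"{provider}: ${monthly:,.0f}/month, ${monthly * 12:,.0f}/year")
```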

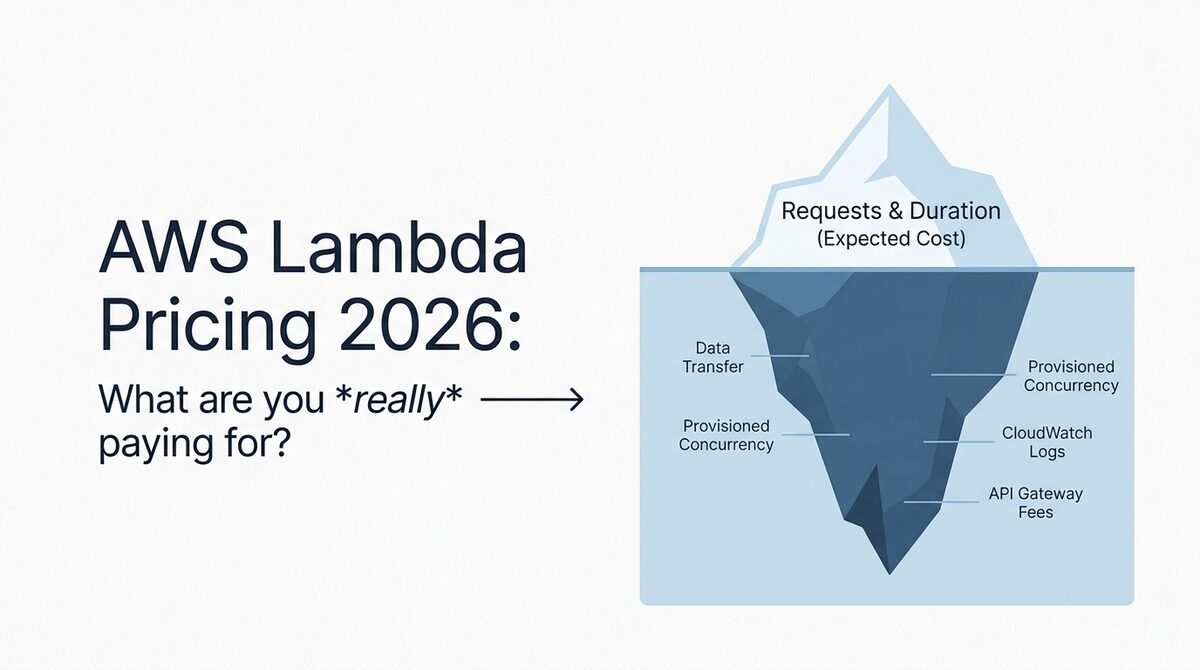

The Hidden Costs That Change Everything at 500TB

Egress (Data Transfer Out)

Egress is where enterprise storage bills explode. If you access 10% of your 500TB per month (50TB of retrievals), here is what egress alone costs:

| Provider | Egress Rate | 50TB Monthly Egress | Annual Egress Cost |

|---|---|---|---|

| AWS S3 | $0.09/GB (first 10TB), tiered | $4,180 | $50,160 |

| Google Cloud Storage | $0.12/GB (first 1TB), tiered | $4,750 | $57,000 |

| Azure Blob | $0.087/GB (first 10TB), tiered | $3,870 | $46,440 |

| Cloudflare R2 | $0.00 | $0 | $0 |

| Wasabi | $0.00 (within policy) | $0 | $0 |

| Backblaze B2 | $0.01/GB (or free via CF) | $0-500 | $0-6,000 |

Read that table again. AWS egress for 50TB/month ($4,180) exceeds the entire storage cost on Wasabi ($3,450). For workloads with even moderate access patterns, egress is not a minor line item. On the hyperscalers it adds roughly 35-50% on top of the hot-tier storage charge at this access rate, and the share grows with every additional terabyte retrieved.
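Tiered egress pricing is easier to reason about as code. The sketch below is a generic tier walker. The schedule in `ASSUMED_TIERS` is an assumption for illustration, not the schedule behind the table above (which lists only the first tier), so the result will not match the table to the dollar. Substitute the provider's current published tiers before relying on the output.

```python
from typing import List, Optional, Tuple

# (tier size in GB, rate per GB); None means "everything beyond this point".
Tier = Tuple[Optional[float], float]


def tiered_egress_cost(gb_out: float, tiers: List[Tier]) -> float:
    """Charge each slice of egress at its tier's rate, in order."""
    remaining = gb_out
    total = 0.0
    for size_gb, rate in tiers:
        if remaining <= 0:
            break
        billed = remaining if size_gb is None else min(remaining, size_gb)
        total += billed * rate
        remaining -= billed
    return total


# Assumed schedule for illustration only: first 10TB, next 40TB, then the rest.
ASSUMED_TIERS: List[Tier] = [(10_000, 0.09), (40_000, 0.085), (None, 0.07)]

cost = tiered_egress_cost(50_000, ASSUMED_TIERS)
print(f"50TB egress under the assumed schedule: ${cost:,.0f}/month")
```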

API Operation Costs

At 500TB of unstructured data (assuming 500 million objects averaging 1MB each), routine operations add up:

| Operation | AWS S3 | GCS | R2 | Wasabi |

|---|---|---|---|---|

| Daily LIST (inventory) | $25/day | $25/day | $2.50/day | $0 |

| Monthly GET (10% access) | $200 | $200 | $18 | $0 |

| Monthly PUT (1% new/update) | $25 | $25 | $22.50 | $0 |

| Monthly DELETE (0.5%) | $0 | $0 | free | $0 |

| Monthly API Total | $975 | $975 | $115 | $0 |

Wasabi's zero API cost is a genuine differentiator at this scale. Organizations running frequent inventory scans, metadata queries, or backup verification against 500M+ objects save $10,000+/year on API fees alone.
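The monthly totals in the table combine one daily charge with several monthly ones. A minimal sketch of that roll-up, assuming a 30-day month (the function name is illustrative):

```python
def monthly_api_total(
    daily_list: float,
    monthly_get: float,
    monthly_put: float,
    monthly_delete: float = 0.0,
    days: int = 30,
) -> float:
    """Sum a daily LIST charge over the month with the monthly GET/PUT/DELETE charges."""
    return daily_list * days + monthly_get + monthly_put + monthly_delete


# Inputs copied from the table above.
aws = monthly_api_total(daily_list=25, monthly_get=200, monthly_put=25)
r2 = monthly_api_total(daily_list=2.50, monthly_get=18, monthly_put=22.50)

print(f"AWS S3: ${aws:,.2f}/month, ${aws * 12:,.0f}/year")
print(f"R2:     ${r2:,.2f}/month, ${r2 * 12:,.0f}/year")
```

The daily inventory LIST dominates both totals, so reducing scan frequency is the first lever to pull on providers that bill per request.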

Minimum Retention and Early Deletion

For unstructured data that might be reorganized, migrated, or partially deleted:

| Provider/Tier | Minimum Retention | Penalty at 500TB (early delete at 30 days) |

|---|---|---|

| Wasabi | 90 days | $6,900 (pay for 60 additional days) |

| S3 Glacier IR | 90 days | $4,000 |

| S3 Glacier Flexible | 90 days | $3,600 |

| S3 Glacier Deep Archive | 180 days | $2,475 (for remaining 150 days) |

| GCS Coldline | 90 days | $4,000 |

| GCS Archive | 365 days | $6,700 (for remaining 335 days) |

If your workflow involves periodic data cleanup or reorganization, these retention penalties are a real cost. For CAD file archives where old revisions are regularly pruned, factor this in.
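The penalties follow a simple rule: you pay for the days left in the minimum retention window. A sketch of that rule, assuming 30-day months and that the whole 500TB is deleted at once (both simplifications; the function name is illustrative):

```python
def early_deletion_penalty(
    monthly_cost: float,
    min_days: int,
    held_days: int,
    days_per_month: int = 30,
) -> float:
    """Charge for the unserved part of the minimum retention period."""
    remaining_days = max(min_days - held_days, 0)
    return monthly_cost * remaining_days / days_per_month


# Monthly storage costs at 500TB, taken from the provider table earlier.
print(early_deletion_penalty(3450, min_days=90, held_days=30))  # Wasabi
print(early_deletion_penalty(2000, min_days=90, held_days=30))  # S3 Glacier IR
print(early_deletion_penalty(1800, min_days=90, held_days=30))  # S3 Glacier Flexible
```

In practice only the pruned fraction incurs the charge, so scale `monthly_cost` by the share of data being deleted.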

Full TCO Modeling: 500TB Unstructured Data

Scenario 1: Engineering CAD Files (Active Access, 10% Monthly Retrieval)

500TB of CAD files, 50TB downloaded per month by engineering teams, 5% monthly uploads (new revisions).

| Provider | Storage | Egress | API Ops | Replication (1x) | Monthly TCO | Annual TCO |

|---|---|---|---|---|---|---|

| AWS S3 Standard | $11,500 | $4,180 | $975 | $11,500 | $28,155 | $337,860 |

| AWS S3 IA | $6,250 | $4,180 | $975 | $6,250 | $17,655 | $211,860 |

| GCS Standard | $10,000 | $4,750 | $975 | $10,000 | $25,725 | $308,700 |

| Cloudflare R2 | $7,500 | $0 | $115 | N/A (multi-region) | $7,615 | $91,380 |

| Wasabi | $3,450 | $0 | $0 | $3,450 | $6,900 | $82,800 |

| Backblaze B2 + CF | $3,000 | $0 | $50 | N/A | $3,050 | $36,600 |

For active CAD file storage with regular access, Backblaze B2 via Cloudflare is 9x cheaper than AWS S3 Standard. Even without the Cloudflare CDN trick, Wasabi saves $255,000/year compared to S3.
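Each row in the table is the sum of its cost columns. The sketch below recomputes three rows and the two comparisons quoted above; the class and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCosts:
    storage: float
    egress: float
    api_ops: float
    replication: float = 0.0  # 0 where the table lists N/A

    @property
    def monthly_tco(self) -> float:
        return self.storage + self.egress + self.api_ops + self.replication

    @property
    def annual_tco(self) -> float:
        return self.monthly_tco * 12


# Figures copied from the Scenario 1 table.
s3_standard = MonthlyCosts(storage=11_500, egress=4_180, api_ops=975, replication=11_500)
wasabi = MonthlyCosts(storage=3_450, egress=0, api_ops=0, replication=3_450)
b2_cf = MonthlyCosts(storage=3_000, egress=0, api_ops=50)

print(f"S3 Standard vs B2 + CF: {s3_standard.monthly_tco / b2_cf.monthly_tco:.1f}x")
print(f"Wasabi annual saving vs S3: ${s3_standard.annual_tco - wasabi.annual_tco:,.0f}")
```

Note that the B2 row carries no replication charge while the S3 and Wasabi rows do, so the ratio compares configurations with different redundancy.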

Scenario 2: Video Surveillance Archive (Rare Access, 1% Monthly Retrieval)

500TB of video footage retained for compliance, accessed only during investigations (roughly 5TB/month).

| Provider | Storage | Egress | API Ops | Monthly TCO | Annual TCO |

|---|---|---|---|---|---|

| AWS S3 Glacier IR | $2,000 | $450 | $100 | $2,550 | $30,600 |

| AWS S3 Glacier Deep | $495 | $450 | $100 | $1,045 | $12,540 |

| GCS Archive | $600 | $600 | $100 | $1,300 | $15,600 |

| Azure Archive | $495 | $435 | $80 | $1,010 | $12,120 |

| Wasabi | $3,450 | $0 | $0 | $3,450 | $41,400 |

| Backblaze B2 + CF | $3,000 | $0 | $50 | $3,050 | $36,600 |

For rarely accessed compliance data, archival tiers from AWS, GCP, and Azure are 2-3x cheaper than Wasabi or B2. The per-GB rates at the archival tier ($0.001/GB) simply cannot be matched by single-tier providers. Wasabi's $3,450/month for rarely accessed data is not competitive against Glacier Deep Archive's $495/month.

The tradeoff: if you ever need a full restore from Glacier Deep Archive, the retrieval bill ($5,000+) wipes out about two months of the savings over Wasabi ($3,450 vs $1,045 per month), and you wait hours for the data. For surveillance data accessed during active investigations (unpredictable, potentially large retrieval), Glacier Instant Retrieval at $2,000/month offers the best balance.
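One way to sanity-check a retrieval trade-off is to express the one-off bill in months of savings. The sketch below does that; the function name is illustrative, and the $5,000 input is the lower bound quoted above rather than a provider quote.

```python
def months_of_savings_consumed(
    one_off_cost: float,
    expensive_monthly: float,
    cheap_monthly: float,
) -> float:
    """How many months of savings a single one-off charge cancels out."""
    monthly_savings = expensive_monthly - cheap_monthly
    if monthly_savings <= 0:
        raise ValueError("the 'cheap' option must cost less per month")
    return one_off_cost / monthly_savings


# Monthly TCO figures from the Scenario 2 table: Wasabi vs Glacier Deep Archive.
months = months_of_savings_consumed(5_000, expensive_monthly=3_450, cheap_monthly=1_045)
print(f"A $5,000 restore consumes {months:.1f} months of savings")
```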

Scenario 3: Research Data Lake (Growing, Mixed Access Patterns)

500TB today growing 10% annually, with 30% hot (daily access), 40% warm (weekly), 30% cold (monthly or less).

| Strategy | Implementation | Monthly TCO | Annual TCO |

|---|---|---|---|

| Single tier (S3 Standard) | All on S3 Standard | $28,000+ | $336,000+ |

| S3 Intelligent-Tiering | Auto-tiering (no retrieval fees) | $8,500-12,000 | $102,000-144,000 |

| Multi-provider tiered | Hot: R2 (150TB), Warm: Wasabi (200TB), Cold: Glacier IR (150TB) | $4,680 | $56,160 |

| Hybrid (on-prem + cloud) | Hot: on-prem NAS, Cold: Glacier DA | $3,500-5,000 | $42,000-60,000 |

The multi-provider tiered approach is 6x cheaper than naive S3 Standard, and even 2x cheaper than S3 Intelligent-Tiering. The operational complexity is the cost: you need lifecycle management, data movement automation, and unified access tooling (rclone, MinIO gateway, or a custom abstraction layer).
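To see where the multi-provider figure comes from, the sketch below prices the storage component of that split using the per-GB rates from the provider table. It covers storage only, so it comes in below the table's all-in monthly figure, which also has to absorb egress and API charges.

```python
GB_PER_TB = 1_000

# (provider, terabytes, rate per GB) for the 30/40/30 hot/warm/cold split.
allocation = [
    ("Cloudflare R2", 150, 0.015),
    ("Wasabi", 200, 0.0069),
    ("S3 Glacier Instant Retrieval", 150, 0.004),
]


def blended_storage_cost(parts) -> float:
    """Sum the monthly storage charge across every provider in the split."""
    return sum(tb * GB_PER_TB * rate for _, tb, rate in parts)


for name, tb, rate in allocation:
    print(f"{name}: {tb}TB -> ${tb * GB_PER_TB * rate:,.0f}/month")
print(f"Storage subtotal: ${blended_storage_cost(allocation):,.0f}/month")
```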

Decision Framework: Choosing the Right Strategy for 500TB

Use Wasabi When:

- Data is warm (accessed weekly to monthly)

- Egress is within the stored-amount policy (50TB egress on 500TB stored is fine)

- Retention exceeds 90 days (no early deletion penalties)

- You want predictable billing with no surprise egress charges

- Best for: CAD file libraries, media archives, backup repositories

Use Backblaze B2 + Cloudflare When:

- You need the absolute lowest per-GB rate for active data

- Egress needs are high (free via Cloudflare CDN partnership)

- You can tolerate the Cloudflare integration requirement

- Best for: Content delivery, public datasets, media streaming origins

Use AWS S3 Glacier Deep Archive When:

- Data is truly archival (accessed less than once per year)

- You can wait 12+ hours for retrieval

- Compliance requires proven 11-nines durability

- Best for: Legal retention, compliance archives, disaster recovery copies

Use Cloudflare R2 When:

- Access patterns are unpredictable or high-egress

- You need S3-compatible APIs without egress surprises

- Multi-region availability matters (R2 is distributed by design)

- Best for: SaaS platforms serving user-uploaded content, AI training datasets accessed from multiple regions

Use S3 Intelligent-Tiering When:

- Access patterns are genuinely unpredictable

- You want zero operational overhead (no lifecycle rules to manage)

- You are already in the AWS ecosystem

- Best for: Data lakes with mixed query patterns, growing datasets where access frequency declines over time

The Multi-Provider Strategy That Saves 70%

For most enterprises with 500TB+ of unstructured data, no single provider is optimal for all access patterns. The cost-optimal strategy combines providers based on data temperature:

Step 1: Classify your data by access frequency

- Hot (daily access): 10-20% of total data

- Warm (weekly access): 30-40% of total data

- Cold (monthly or less): 40-60% of total data

Step 2: Assign providers by tier

- Hot tier: Cloudflare R2 ($0.015/GB, zero egress)

- Warm tier: Wasabi ($0.0069/GB, zero egress within policy)

- Cold tier: S3 Glacier Instant Retrieval ($0.004/GB, ms access when needed)

- Archive: S3 Glacier Deep Archive ($0.00099/GB, 12-hour retrieval)
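Steps 1 and 2 amount to a lookup from idle time to a provider. The sketch below is one way to express it. The 30- and 90-day cutoffs mirror the age thresholds used in the automation step that follows, and the 365-day cutoff reflects the "less than once per year" guidance for Deep Archive. All three are assumptions to tune against your own access logs.

```python
# Provider assignments from Step 2.
TIER_TARGETS = {
    "hot": "Cloudflare R2",
    "warm": "Wasabi",
    "cold": "S3 Glacier Instant Retrieval",
    "archive": "S3 Glacier Deep Archive",
}


def assign_tier(days_since_access: int) -> str:
    """Map idle time to a tier name using assumed cutoffs of 30, 90, and 365 days."""
    if days_since_access < 0:
        raise ValueError("days_since_access must be non-negative")
    if days_since_access < 30:
        return "hot"
    if days_since_access < 90:
        return "warm"
    if days_since_access < 365:
        return "cold"
    return "archive"


for idle_days in (3, 45, 200, 800):
    tier = assign_tier(idle_days)
    print(f"{idle_days:>4} days idle -> {tier} ({TIER_TARGETS[tier]})")
```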

Step 3: Automate data movement

Tools like rclone, MinIO, or custom Lambda functions can automate lifecycle transitions. Note that rclone's `--min-age` filters on modification time, not last access, so true access-based tiering needs access logs or an external catalog. A simple age-based approach:

```bash
# Move files not modified in 30 days from R2 to Wasabi
rclone move r2:hot-bucket wasabi:warm-bucket \
  --min-age 30d \
  --transfers 32 \
  --checkers 16

# Move files not modified in 90 days from Wasabi to Glacier Instant Retrieval
rclone move wasabi:warm-bucket s3:archive-bucket \
  --min-age 90d \
  --s3-storage-class GLACIER_IR
```

Step 4: Implement a unified access layer

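An S3-compatible proxy or a custom routing service provides a single endpoint that routes reads to the correct provider based on metadata. MinIO's gateway mode was once a common choice here, but it was deprecated in 2022, so confirm that whatever layer you pick is still maintained. A working access layer removes most of the operational burden of tracking which files live where.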
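At its core, such an access layer is a catalog lookup followed by a redirect. The sketch below shows only that lookup. The endpoint URLs, class name, and object keys are hypothetical, and a real deployment would back the catalog with a database and sign requests per provider.

```python
from dataclasses import dataclass, field

# Hypothetical endpoints, one per tier; replace with real provider URLs.
ENDPOINTS = {
    "hot": "https://hot.example.invalid",
    "warm": "https://warm.example.invalid",
    "cold": "https://cold.example.invalid",
}


@dataclass
class TierCatalog:
    """In-memory map of object key -> tier name."""

    locations: dict = field(default_factory=dict)

    def record(self, key: str, tier: str) -> None:
        """Register or update where an object lives, e.g. after a lifecycle move."""
        if tier not in ENDPOINTS:
            raise ValueError(f"unknown tier: {tier}")
        self.locations[key] = tier

    def resolve(self, key: str) -> str:
        """Return the endpoint currently holding the object (KeyError if untracked)."""
        return ENDPOINTS[self.locations[key]]


catalog = TierCatalog()
catalog.record("cad/bracket-rev7.step", "hot")
catalog.record("cad/bracket-rev2.step", "cold")
print(catalog.resolve("cad/bracket-rev2.step"))
```

The catalog must be updated in the same job that moves the data, otherwise reads will be routed to a provider that no longer holds the object.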

On-Premises vs. Cloud: Where 500TB TCO Crosses Over

At 500TB, on-premises storage deserves serious consideration. Here is the honest comparison:

| Factor | Cloud (Optimized Multi-Provider) | On-Premises (NetApp/Dell) |

|---|---|---|

| Monthly cost | $5,000-8,000 | $2,000-3,500 (amortized 5yr) |

| Upfront CapEx | $0 | $400,000-800,000 |

| Geographic redundancy | Built-in | Requires second site (2x cost) |

| Disaster recovery | Included in replication | Separate backup infrastructure |

| Scaling beyond 500TB | Instant, linear cost | Procurement cycle (weeks/months) |

| Staff overhead | Minimal (managed) | 0.5-1 FTE for storage admin |

| Durability guarantee | Designed for 11 nines (AWS S3) | Depends on RAID config and maintenance |

| Time to deploy | Minutes | Weeks to months |

The crossover point: If you have an existing data center with rack space, power, and cooling already paid for, on-premises wins on pure per-GB cost starting around 200-300TB. If you need geographic redundancy, the cloud wins at any scale because replicating a data center is prohibitively expensive.

The hybrid answer: Most enterprises at 500TB+ use a hybrid approach. Primary/hot copies on-premises for performance, with cloud-based DR and cold archives. This captures the low per-GB on-premises cost while getting cloud durability for backups.

Migration Cost: Getting 500TB Into the Cloud

The cost of migrating 500TB into a cloud provider is non-trivial and often overlooked:

| Method | Time | Cost | Best For |

|---|---|---|---|

| Direct internet upload (1 Gbps) | ~46 days | $0 (bandwidth) | Patient teams with stable connectivity |

| AWS Snowball Edge (80TB each) | 7-14 days | $1,800-2,400 (7 devices) | Large datasets where network is bottleneck |

| AWS Snowmobile (100PB) | Weeks | Custom pricing | Petabyte-scale (overkill for 500TB) |

| Google Transfer Appliance | 7-14 days | ~$2,500 | GCS-bound migrations |

| Azure Data Box Heavy (1PB) | 5-10 days | ~$6,000 | Azure-bound migrations |

| Direct Connect/Dedicated Link | 5-10 days | $2,000-5,000/month | Ongoing hybrid connectivity |

For a one-time migration, physical appliances (Snowball, Data Box, Transfer Appliance) are the most cost-effective path. For ongoing hybrid workflows with regular data movement, a dedicated interconnect pays for itself within 2-3 months at this data volume.
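The time and device counts in the table can be checked with two short functions. The sketch assumes decimal units and, by default, a fully saturated link. Real transfers rarely sustain that, which is why the `utilization` parameter (an illustrative addition) is exposed.

```python
import math


def upload_days(terabytes: float, link_gbps: float, utilization: float = 1.0) -> float:
    """Days needed to push the data over a link at the given sustained utilization."""
    bits = terabytes * 1e12 * 8
    seconds = bits / (link_gbps * 1e9 * utilization)
    return seconds / 86_400


def appliances_needed(terabytes: float, capacity_tb: float) -> int:
    """Number of transfer appliances required, rounding up to whole devices."""
    return math.ceil(terabytes / capacity_tb)


print(f"1 Gbps, saturated:  {upload_days(500, 1):.1f} days")
print(f"1 Gbps, 70% usable: {upload_days(500, 1, utilization=0.7):.1f} days")
print(f"10 Gbps, saturated: {upload_days(500, 10):.1f} days")
print(f"80TB appliances:    {appliances_needed(500, 80)}")
```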

The Bottom Line

At 500TB of unstructured data, naive single-provider storage on AWS S3 Standard costs $138,000+/year. An optimized multi-provider tiered strategy costs $36,000-56,000/year for comparable access patterns, a savings of $80,000-100,000 annually.

The optimal approach for most enterprises:

- Classify data by access frequency (hot/warm/cold/archive)

- Assign the cost-optimal provider per tier

- Automate lifecycle transitions with rclone or equivalent tooling

- Implement a unified access layer for operational simplicity

The savings at this scale justify the architectural complexity. A $100,000/year savings pays for a dedicated FinOps engineer focused solely on storage optimization.

Need help designing a cost-optimal storage strategy for your enterprise data? Our cloud cost optimization team specializes in large-scale storage architecture and typically reduces storage TCO by 40-70% within 90 days. We have migrated multiple 500TB+ workloads to optimized multi-provider architectures. Get a free Cloud Waste Assessment to see where your storage spend is leaking.
