The Brutal Truth About Cloud Cost Tools Nobody Tells You

Here is a number that should make every engineering leader uncomfortable: the average company discovers a runaway cost spike 18 to 26 days after it starts.

By that point, the damage is done. An autoscaling misconfiguration, a forgotten GPU instance left running over a long weekend, or a Lambda function suddenly processing 10x the expected traffic has already generated a bill nobody budgeted for.

The problem is not that teams lack data. It is that they are looking at the wrong data, from the wrong tools, at the wrong time.

Most cloud cost tools give you yesterday’s information dressed up in a beautiful dashboard. "Real-time" in the industry means anything from 15 minutes to 24 hours delayed. Only a handful of tools actually show you what is happening right now, before that misconfiguration turns into a $30,000 surprise on your next invoice.

This guide breaks down the 10 best real-time cloud cost optimization tools available in 2026, with the specific strengths, pricing traps, and insider details that most vendor comparison pages leave out. By the end, you will know exactly which tool fits your team, your stack, and your budget, without paying for features you will never use.

Why Your Monthly Bill is Already Too Late

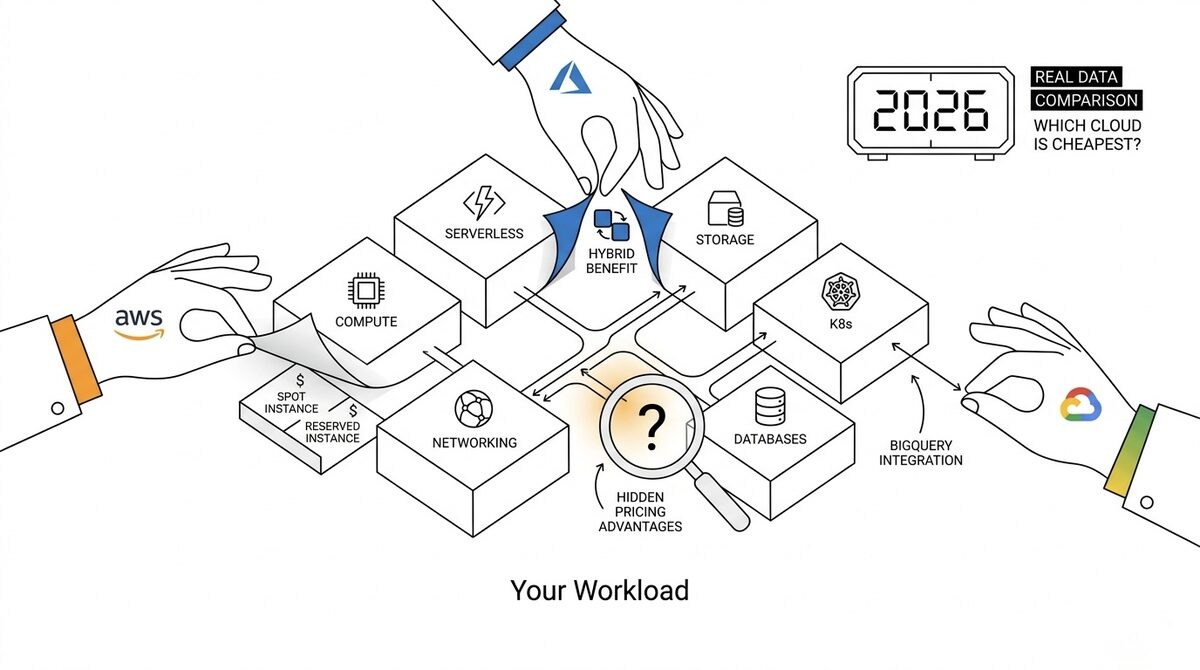

Think about how cloud billing actually works. AWS sends your invoice on the 3rd business day of the following month. Azure closes billing on the last day. GCP calculates credits and committed use discounts retroactively.

That means a company running $100,000 per month in cloud spend is making financial decisions with information that is 30 to 45 days stale. No CFO would accept last month’s bank statement as a current account balance, yet most engineering teams run cloud budgets exactly this way.

Real-time cost monitoring changes the equation. When you can see spend changing by the hour, you can catch problems before they compound:

- A Lambda function with a memory configuration mistake that costs $0.02 per request instead of $0.001 becomes visible in 2 hours, not 2 weeks

- A staging Kubernetes cluster someone forgot to scale down over a bank holiday shows up on Tuesday morning, not in next month’s finance review

- A developer who pushed code triggering 500x more API calls than expected gets a Slack alert before they even close their laptop
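The cost of detection delay is simple arithmetic. The sketch below uses the per-request figures from the Lambda example and assumes a hypothetical 20,000 requests per hour; swap in your own traffic to see what each hour of delay costs.

```python
"""Excess spend accumulated while a per-request cost mistake goes unnoticed."""

REQUESTS_PER_HOUR = 20_000  # hypothetical traffic level; replace with your own


def excess_cost(
    hours_undetected: float,
    requests_per_hour: float = REQUESTS_PER_HOUR,
    actual_cost_per_request: float = 0.02,
    expected_cost_per_request: float = 0.001,
) -> float:
    """Return the overspend in USD after `hours_undetected` hours."""
    overspend_per_request = actual_cost_per_request - expected_cost_per_request
    return hours_undetected * requests_per_hour * overspend_per_request


for label, hours in [("2 hours", 2), ("2 weeks", 14 * 24)]:
    print(f"Caught after {label}: ${excess_cost(hours):,.2f} of excess spend")
```

At that assumed traffic level, catching the mistake in 2 hours limits the damage to about $760; waiting 2 weeks lets it grow to roughly $127,680.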

The tooling category that makes this possible has matured significantly. Here is what you need to know.

What "Real-Time" Actually Means (And What Vendors Won’t Admit)

Before comparing tools, you need to understand a dirty secret of the industry: "real-time" is a marketing term with no standard definition.

Here is how the major cloud providers actually expose billing data:

- AWS: Cost and Usage Reports (CUR) refresh up to three times per day. Choosing hourly granularity gives you finer-grained line items, but the data still arrives with a 4 to 8 hour lag.

- Azure: Cost Management API updates roughly every 8 hours. Some services report near-real-time; others have 24-hour delays.

- GCP: Billing data exports to BigQuery with roughly a 1-hour delay for most services, which is the best native latency of the three major clouds.

Any tool claiming true real-time monitoring is either using the cloud provider’s fastest available export or pulling from a different data source like CloudWatch metrics, Azure Monitor, or Cloud Operations Suite. The tools that combine both data sources give you the most accurate picture. Now let us look at which tools actually deliver.
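To illustrate the metrics-based approach, the sketch below estimates Lambda spend from CloudWatch's `Invocations` and `Duration` metrics instead of waiting for billing data. The two prices are assumptions based on published x86 on-demand rates in us-east-1; check current Lambda pricing for your region and architecture. The estimate ignores the free tier, ephemeral storage, and provisioned concurrency, and memory size must be passed in because it is not part of the metrics.

```python
"""Rough near-real-time Lambda cost estimate from CloudWatch metrics."""
from datetime import datetime, timedelta, timezone

# Assumed prices (x86, us-east-1); verify against current AWS pricing.
PRICE_PER_REQUEST = 0.20 / 1_000_000  # USD per invocation
PRICE_PER_GB_SECOND = 0.0000166667    # USD per GB-second of compute


def estimate_lambda_cost(invocations: float, avg_duration_ms: float, memory_mb: int) -> float:
    """Estimate spend for a batch of invocations with a given average duration."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return invocations * PRICE_PER_REQUEST + gb_seconds * PRICE_PER_GB_SECOND


def last_hour_estimate(function_name: str, memory_mb: int) -> float:
    """Price roughly the last hour of metrics for one function (needs boto3 and credentials)."""
    import boto3  # imported lazily so the pricing math works without AWS access

    cloudwatch = boto3.client("cloudwatch")
    end = datetime.now(timezone.utc)
    common = {
        "Namespace": "AWS/Lambda",
        "Dimensions": [{"Name": "FunctionName", "Value": function_name}],
        "StartTime": end - timedelta(hours=1),
        "EndTime": end,
        "Period": 3600,
    }
    invocations = cloudwatch.get_metric_statistics(MetricName="Invocations", Statistics=["Sum"], **common)
    duration = cloudwatch.get_metric_statistics(MetricName="Duration", Statistics=["Average"], **common)
    total_invocations = sum(p["Sum"] for p in invocations["Datapoints"])
    averages = [p["Average"] for p in duration["Datapoints"]]
    avg_ms = sum(averages) / len(averages) if averages else 0.0  # unweighted, so approximate
    return estimate_lambda_cost(total_invocations, avg_ms, memory_mb)


print(f"1M invocations at 100 ms and 1,024 MB: ${estimate_lambda_cost(1_000_000, 100, 1024):.2f}")
```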

Top 10 Real-Time Cloud Cost Optimization Tools in 2026

1. CloudZero

Best for: SaaS companies that need unit economics, not just aggregate cloud costs

CloudZero is not just a cost dashboard. It is built around a concept called Cost Intelligence, which maps cloud spend to business dimensions like customer, feature, team, and environment. This is fundamentally different from showing you "EC2 costs $45,000 this month." CloudZero can tell you, for example, that your enterprise customers are each consuming 4x the infrastructure of your SMB customers, which means your pricing model has a margin problem nobody on the finance team could see.

The insider detail most reviews miss: CloudZero requires real engineering effort upfront. You need to instrument your application with custom cost allocation rules, and that process typically takes 2 to 4 weeks. Teams that skip this step end up paying $1,500 to $3,000 per month for a dashboard showing them the same aggregated numbers they could get from native tools for free.

When you do the instrumentation correctly, CloudZero becomes one of the most powerful tools in your FinOps stack, especially if you are a SaaS company that needs to understand cost per customer for accurate pricing decisions.
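The core idea behind per-customer cost visibility can be sketched as proportional allocation: split a shared bill by each customer's share of some usage driver. This is not CloudZero's allocation engine, just the underlying arithmetic. The customers, segments, and usage units are hypothetical, and choosing a driver that actually tracks infrastructure load is the hard part of the instrumentation work.

```python
"""Allocate a shared monthly bill to customers by usage share, then compare segments."""
from collections import defaultdict


def allocate(total_cost: float, usage_by_customer: dict[str, float]) -> dict[str, float]:
    """Split total_cost proportionally to each customer's usage units."""
    total_usage = sum(usage_by_customer.values())
    return {c: total_cost * u / total_usage for c, u in usage_by_customer.items()}


def average_cost_by_segment(costs: dict[str, float], segment_of: dict[str, str]) -> dict[str, float]:
    """Average allocated cost per customer within each segment."""
    sums: dict[str, float] = defaultdict(float)
    counts: dict[str, int] = defaultdict(int)
    for customer, cost in costs.items():
        segment = segment_of[customer]
        sums[segment] += cost
        counts[segment] += 1
    return {s: sums[s] / counts[s] for s in sums}


# Hypothetical month: 3 enterprise and 10 SMB customers sharing a $45,000 EC2 bill.
usage = {f"ent-{i}": 400.0 for i in range(3)} | {f"smb-{i}": 100.0 for i in range(10)}
segments = {c: ("enterprise" if c.startswith("ent") else "smb") for c in usage}
per_segment = average_cost_by_segment(allocate(45_000, usage), segments)
print({s: round(v, 2) for s, v in per_segment.items()})
print(f"Enterprise/SMB cost ratio: {per_segment['enterprise'] / per_segment['smb']:.1f}x")
```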

Pricing signal: Percentage of cloud spend, typically 1 to 2%. Budget accordingly if your cloud bill is $200k or more per month.

2. Kubecost

Best for: Any team running workloads on Kubernetes

Kubernetes billing is invisible by default. Your cloud provider tells you what the nodes cost. It tells you nothing about which namespace, deployment, or team is responsible for that spend. Kubecost fills this gap at the container level.

The most important thing to know about Kubecost: the open-source free tier is genuinely capable. For most teams running fewer than 10 clusters, you do not need the commercial version. The free tier gives you namespace-level cost allocation, right-sizing recommendations, and network cost visibility. That alone has helped engineering teams identify 20 to 40% waste in their clusters without spending a dollar.

The insight that changes how you use it: Kubecost allocates cost based on CPU and memory requests (or actual usage, whichever is higher), not on usage alone. If your pods are requesting 4 CPUs but consuming 0.5, you pay for all 4, and Kubecost flags the unused 3.5 as idle waste. The fix is in your Helm values or deployment manifests, not in Kubecost itself. The tool identifies the problem precisely; fixing it requires the engineering discipline to lower resource requests across your services.
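The request-versus-usage logic can be reproduced in a few lines to sanity-check what Kubecost reports. The price per core-hour and the container figures are hypothetical, and real Kubecost allocation also covers memory, GPU, storage, and network, which this sketch leaves out.

```python
"""Estimate idle CPU cost from requests vs. usage, mirroring max(request, usage) allocation."""
from dataclasses import dataclass

CPU_CORE_HOUR_PRICE = 0.03  # hypothetical blended USD per vCPU-hour
HOURS_PER_MONTH = 730


@dataclass
class Container:
    name: str
    cpu_request: float  # cores requested in the pod spec
    cpu_usage: float    # average cores actually consumed


def allocated_cores(c: Container) -> float:
    """Cores you are charged for: the larger of request and usage."""
    return max(c.cpu_request, c.cpu_usage)


def idle_cores(c: Container) -> float:
    """Requested cores that sit unused."""
    return max(c.cpu_request - c.cpu_usage, 0.0)


def monthly_idle_cost(containers: list[Container]) -> float:
    return sum(idle_cores(c) for c in containers) * CPU_CORE_HOUR_PRICE * HOURS_PER_MONTH


fleet = [
    Container("checkout-api", cpu_request=4.0, cpu_usage=0.5),
    Container("search", cpu_request=2.0, cpu_usage=1.8),
    Container("batch-worker", cpu_request=1.0, cpu_usage=1.4),  # bursting above its request
]
for c in fleet:
    print(f"{c.name}: allocated {allocated_cores(c):.1f} cores, idle {idle_cores(c):.1f}")
print(f"Idle CPU cost per month: ${monthly_idle_cost(fleet):,.2f}")
```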

Kubecost also integrates natively with Prometheus, which most Kubernetes teams already run, so the incremental setup cost is minimal.

External resource: Kubecost open source on GitHub

3. AWS Cost Anomaly Detection

Best for: Any AWS customer who wants zero-cost real-time monitoring today

This is the most underused free tool in AWS. A survey of AWS accounts found that fewer than 15% of teams with Cost Anomaly Detection available have it configured with actionable alerts. Most people turn it on, see the default dashboard once, and forget it exists.

Here is the setup that makes it actually useful: create a monitor that covers your top 5 cost categories (EC2, RDS, Lambda, S3, Data Transfer), attach an alert subscription with an absolute threshold of $100 in anomaly impact instead of a percentage-based threshold, and route everything to an SNS topic that posts to Slack. This takes 30 minutes and is completely free.
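A minimal sketch of that setup with boto3, assuming you already have an SNS topic whose access policy allows `costalerts.amazonaws.com` to publish to it. It uses an AWS services monitor, which evaluates each service separately, and an absolute-impact threshold of $100. The monitor and subscription names are hypothetical; the `build_*` functions only assemble request payloads, so they can be reviewed before anything is created. If the account already has an AWS services monitor, you can reuse its ARN instead of creating a new one.

```python
"""Create a Cost Anomaly Detection monitor and a $100 absolute-impact SNS alert."""


def build_monitor_request(name: str = "per-service-monitor") -> dict:
    """AWS services monitor: evaluates each service (EC2, RDS, Lambda, S3, ...) on its own."""
    return {
        "AnomalyMonitor": {
            "MonitorName": name,
            "MonitorType": "DIMENSIONAL",
            "MonitorDimension": "SERVICE",
        }
    }


def build_subscription_request(monitor_arn: str, sns_topic_arn: str, threshold_usd: int = 100) -> dict:
    """Alert on any anomaly whose total dollar impact is at least threshold_usd."""
    return {
        "AnomalySubscription": {
            "SubscriptionName": "cost-anomalies-to-slack",  # hypothetical name
            "MonitorArnList": [monitor_arn],
            "Subscribers": [{"Type": "SNS", "Address": sns_topic_arn}],
            "Frequency": "IMMEDIATE",  # immediate delivery requires an SNS subscriber
            "ThresholdExpression": {
                "Dimensions": {
                    "Key": "ANOMALY_TOTAL_IMPACT_ABSOLUTE",
                    "MatchOptions": ["GREATER_THAN_OR_EQUAL"],
                    "Values": [str(threshold_usd)],
                }
            },
        }
    }


def create_alerting(sns_topic_arn: str) -> str:
    """Create both resources; requires boto3 and credentials with Cost Explorer permissions."""
    import boto3

    ce = boto3.client("ce")
    monitor_arn = ce.create_anomaly_monitor(**build_monitor_request())["MonitorArn"]
    ce.create_anomaly_subscription(**build_subscription_request(monitor_arn, sns_topic_arn))
    return monitor_arn
```

From there, the SNS topic can be connected to a Slack channel through AWS's own Slack integration or a small webhook forwarder.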

The limitation to understand: AWS Cost Anomaly Detection uses ML trained on your historical patterns. For new workloads or teams in their first 30 to 60 days on AWS, the model lacks sufficient history to detect anomalies accurately. You will get either too many false positives or miss real spikes entirely. The tool gets more accurate as your account matures.

It also inherits the multi-hour lag of the underlying AWS billing data. It will not catch a Lambda function that runs for 2 hours and costs $800 in a single burst until after the money is already spent. But for sustained cost increases over hours and days, it works remarkably well for the price of nothing.

4. Azure Cost Management and Advisor

Best for: Azure-primary workloads, especially enterprise and hybrid environments

Azure Cost Management is free with every Azure subscription, and it is more capable than most Azure customers realize. The feature most teams never find: the "Accumulated costs" view in Cost Analysis. When you toggle this view, it extrapolates your current spend rate forward to give you a projected month-end total. Check this every Monday morning and you will never be surprised by your Azure invoice again.
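Azure computes its own forecast, but a naive run-rate projection is a useful cross-check when you pull cost data through an API or export. The sketch assumes `spend_to_date` covers every day of the month through `through_day` inclusive; it does not model weekday patterns or one-off purchases, so treat it as a rough baseline rather than a replacement for Azure's forecast. The figures are hypothetical.

```python
"""Naive month-end projection from month-to-date spend."""
import calendar
from datetime import date


def project_month_end(spend_to_date: float, through_day: date) -> float:
    """Extrapolate the average daily spend so far across the whole month."""
    days_in_month = calendar.monthrange(through_day.year, through_day.month)[1]
    daily_rate = spend_to_date / through_day.day
    return daily_rate * days_in_month


# Hypothetical: $12,000 spent in the first 10 days of April 2026.
print(f"Projected April total: ${project_month_end(12_000, date(2026, 4, 10)):,.0f}")
```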

Azure Advisor layers on top with automated right-sizing recommendations. The insight about Advisor that actually matters: its recommendations are based on 7-day average utilization data, which makes them conservative. An instance with high utilization on 6 of 7 days will not be flagged as oversized. To get tighter optimization, look at 95th percentile utilization instead of average, which requires filtering metrics manually in Azure Monitor.
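To see why the choice of statistic matters, the sketch below computes the average and a nearest-rank 95th percentile for a synthetic week of hourly CPU samples. The data is invented: a VM that idles at 5% for six days and runs at 90% for one. In practice you would export the `Percentage CPU` metric from Azure Monitor, ideally over a longer window.

```python
"""Compare average and 95th-percentile CPU for the same week of samples."""
import math
from statistics import mean


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(math.ceil(pct / 100 * len(ordered)), 1)
    return ordered[rank - 1]


# Synthetic week: 144 quiet hours at 5% CPU, 24 busy hours at 90%.
week = [5.0] * (6 * 24) + [90.0] * 24
print(f"Average: {mean(week):.1f}%  P95: {percentile(week, 95):.1f}%")
```

The average (about 17%) describes a lightly loaded VM, while the P95 (90%) shows the busy day that a single average hides.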

For hybrid environments where workloads run both on-premises and in Azure, the integration with Azure Arc gives you a unified cost view that no third-party tool can fully replicate yet. If hybrid is your reality, lean into native tools before buying something additional.

5. Google Cloud Cost Management Suite

Best for: GCP-native workloads, data engineering teams, and analytics-heavy organizations

GCP has the best native billing data latency of the three major clouds. When you export billing data to BigQuery (the export itself is free and takes about 10 minutes to set up; you pay only standard BigQuery storage and query charges), you get hourly granularity with roughly a 1-hour lag for most services.
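Once the export is running, a query like the one below gives an hourly, per-service view of net cost. The table name is a placeholder: the standard usage export writes to a table named `gcp_billing_export_v1_` followed by your billing account ID, in the dataset you chose. Credits are stored as negative amounts in the repeated `credits` field, so adding them yields net cost. Running it requires the `google-cloud-bigquery` package and application default credentials.

```python
"""Hourly net cost per service from the GCP billing export in BigQuery."""

# Placeholder table; replace with your project, dataset, and export table name.
BILLING_TABLE = "my-project.billing_export.gcp_billing_export_v1_XXXXXX_XXXXXX_XXXXXX"


def hourly_cost_query(table: str, hours: int = 24) -> str:
    """Build a query summing cost plus (negative) credits per service per usage hour."""
    return f"""
SELECT
  service.description AS service,
  TIMESTAMP_TRUNC(usage_start_time, HOUR) AS usage_hour,
  SUM(cost) + SUM(IFNULL((SELECT SUM(c.amount) FROM UNNEST(credits) AS c), 0)) AS net_cost
FROM `{table}`
WHERE usage_start_time >= TIMESTAMP_SUB(CURRENT_TIMESTAMP(), INTERVAL {int(hours)} HOUR)
GROUP BY service, usage_hour
ORDER BY usage_hour DESC, net_cost DESC
"""


def run_hourly_report(table: str = BILLING_TABLE, hours: int = 24) -> list:
    """Execute the query (needs google-cloud-bigquery and credentials)."""
    from google.cloud import bigquery

    client = bigquery.Client()
    return list(client.query(hourly_cost_query(table, hours)).result())
```

Late-arriving usage keeps updating recent hours, so the newest rows will usually grow on later runs.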

The insight most GCP teams miss: you can build your own cost dashboards in Looker Studio connected to your BigQuery billing export at no cost. GCP provides template dashboards showing spend by service, project, label, and region. This combination (billing export plus Looker Studio) covers 90% of what paid third-party tools offer for GCP workloads, and it costs nothing.

For cost optimization specifically, GCP’s Recommender API surfaces actionable recommendations across Compute, Cloud SQL, and Persistent Disk. Committed Use Discount recommendations are particularly accurate for stable baseline workloads. And GCP’s Sustained Use Discounts, which apply automatically to eligible Compute Engine machine types (E2, for example, is not eligible) without any commitment on your part, are a built-in advantage most teams do not realize they are already receiving.
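A minimal sketch of reading machine-type (right-sizing) recommendations with the `google-cloud-recommender` client library. It assumes the Recommender API is enabled and that you query one zone at a time; the project ID and zone in the usage comment are placeholders. A negative projected cost in a recommendation's primary impact represents savings.

```python
"""List Compute Engine machine-type recommendations and their projected cost impact."""

MACHINE_TYPE_RECOMMENDER = "google.compute.instance.MachineTypeRecommender"


def recommender_parent(project: str, zone: str, recommender: str = MACHINE_TYPE_RECOMMENDER) -> str:
    """Resource name the API expects as the `parent` of list_recommendations."""
    return f"projects/{project}/locations/{zone}/recommenders/{recommender}"


def money_to_float(units: int, nanos: int) -> float:
    """Convert a google.type.Money amount (units + nanos) to a float."""
    return units + nanos / 1e9


def list_rightsizing(project: str, zone: str):
    """Yield (description, projected cost change) pairs; needs google-cloud-recommender."""
    from google.cloud import recommender_v1

    client = recommender_v1.RecommenderClient()
    for rec in client.list_recommendations(parent=recommender_parent(project, zone)):
        cost = rec.primary_impact.cost_projection.cost
        yield rec.description, money_to_float(cost.units, cost.nanos)


# Usage (placeholders): for desc, delta in list_rightsizing("my-project", "us-central1-a"): print(desc, delta)
```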

6. Datadog Cloud Cost Management

Best for: Teams already paying for Datadog APM or infrastructure monitoring

Datadog’s Cloud Cost Management feature does something no other tool on this list does: it correlates cost spikes with deployment events, performance metrics, and application logs on the same timeline.

When your AWS bill spikes on a Tuesday at 2pm, Datadog shows you that a deployment at 1:47pm introduced a new Lambda function being invoked at 50x the expected rate. You see the cost spike and its root cause in the same interface, not in two separate tools requiring manual correlation.
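The correlation step can be approximated outside Datadog by looking for deployments in a short window before each spike. This is a simplified sketch, not how Datadog implements it; the 60-minute lookback, service names, and timestamps are hypothetical, and in practice deploy events would come from your CI/CD system.

```python
"""Match a cost spike to deployments that landed shortly before it."""
from datetime import datetime, timedelta


def likely_causes(spike_at: datetime,
                  deployments: list[tuple[str, datetime]],
                  lookback: timedelta = timedelta(minutes=60)) -> list[tuple[str, datetime]]:
    """Return deployments inside [spike_at - lookback, spike_at], most recent first."""
    window_start = spike_at - lookback
    in_window = [d for d in deployments if window_start <= d[1] <= spike_at]
    return sorted(in_window, key=lambda d: d[1], reverse=True)


spike = datetime(2026, 3, 3, 14, 0)
deploys = [
    ("web-frontend", datetime(2026, 3, 3, 9, 15)),
    ("ingest-lambda", datetime(2026, 3, 3, 13, 47)),
    ("billing-service", datetime(2026, 3, 3, 14, 5)),  # after the spike, so excluded
]
print(likely_causes(spike, deploys))
```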

The important caveat: Datadog Cloud Cost Management is only available on Growth plans and above, with platform pricing starting around $1,500 per month. If you are already a Datadog customer, adding cost management is incremental and often worth it. If you are not already a Datadog customer, this is not the right entry point for cost monitoring alone.

The alert to activate immediately: enable a "Cost Monitor" in Datadog and set it to alert on any service-level cost increase greater than 20% week-over-week. This single alert has caught more costly misconfigurations than any other automated check.
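The week-over-week rule is easy to express directly, which also makes it easy to test against historical data before you rely on it. This is a generic sketch, not Datadog's monitor configuration; the service names and figures are hypothetical. A service that appears for the first time is flagged separately because a percentage change from zero is undefined.

```python
"""Flag services whose weekly cost rose more than a threshold week over week."""
from typing import Optional


def wow_increases(last_week: dict[str, float], this_week: dict[str, float],
                  threshold: float = 0.20) -> dict[str, Optional[float]]:
    """Map each flagged service to its fractional increase (None for brand-new spend)."""
    flagged: dict[str, Optional[float]] = {}
    for service, cost in this_week.items():
        previous = last_week.get(service, 0.0)
        if previous == 0:
            if cost > 0:
                flagged[service] = None  # new spend: no baseline to compare against
            continue
        change = (cost - previous) / previous
        if change > threshold:
            flagged[service] = change
    return flagged


last = {"lambda": 1_000.0, "rds": 5_000.0, "s3": 800.0}
current = {"lambda": 1_300.0, "rds": 5_100.0, "s3": 800.0, "sagemaker": 250.0}
print(wow_increases(last, current))
```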

7. Harness Cloud Cost Management

Best for: Teams with significant dev and staging environment waste

Harness has a feature called AutoStopping that deserves its own spotlight. It automatically detects idle cloud resources (VMs, Kubernetes clusters, RDS instances) and stops them after a configurable period of inactivity. When a developer needs the resource again, it starts automatically on access.

For a typical team with 15 developers, dev and staging environments account for 25 to 35% of the total cloud bill. These environments run 24/7 but are actively used maybe 40 hours per week. AutoStopping can reduce dev and staging costs by 50 to 60% with zero workflow disruption, because the environments start on demand in under 60 seconds.
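The arithmetic behind those figures: an environment used 40 of 168 hours a week sits idle about 76% of the time, which is the theoretical ceiling. Idle timeouts before shutdown, early starts, and storage that keeps billing while compute is stopped all pull real savings down. The sketch models only the first of those, with a hypothetical 30 hours per week of keep-alive time, which brings compute savings to about 58%.

```python
"""Upper-bound and buffered savings from stopping idle dev/staging environments."""

HOURS_PER_WEEK = 24 * 7  # 168


def stop_when_idle_savings(active_hours: float, keepalive_hours: float = 0.0) -> float:
    """Fraction of always-on compute cost saved if the environment only runs
    while in use, plus keepalive_hours of idle time before it is stopped."""
    running_hours = min(active_hours + keepalive_hours, HOURS_PER_WEEK)
    return 1 - running_hours / HOURS_PER_WEEK


print(f"Ceiling: {stop_when_idle_savings(40):.0%}")             # only the 40 active hours
print(f"With keep-alive: {stop_when_idle_savings(40, 30):.0%}")  # hypothetical 30 idle hours
```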

This is the most underrated feature in the entire cloud cost management tooling landscape. Most teams spend months debating which production compute to right-size while their staging environment runs $40,000 per year in idle GPU capacity that nobody is using overnight. Harness solves the problem that costs the most for the least operational complexity.

External resource: Harness Cloud Cost Management docs

8. Spot.io by NetApp

Best for: Teams with stateless, containerized workloads that want automated optimization

Spot.io uses machine learning to predict spot instance interruptions and proactively migrate workloads before AWS, Azure, or GCP reclaims the capacity. The result: teams that would normally avoid spot instances due to reliability concerns can run 60 to 80% of their compute on spot pricing without meaningful operational risk.

The product to focus on is Ocean, which manages Kubernetes node groups. Ocean continuously right-sizes node capacity based on actual pod scheduling requirements, instead of the static min/max autoscaling boundaries most teams configure at launch and never revisit. It treats node capacity as a live optimization problem that it solves continuously.

The honest limitation: Spot.io delivers the most value for stateless workloads. Databases, stateful applications, and anything that cannot survive a short interruption notice (2 minutes on AWS, about 30 seconds on Azure and GCP) without significant engineering overhead are not good candidates. Understand your workload profile before evaluating this tool.
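For workloads that can tolerate interruption, the application still has to notice the warning. On AWS, a Spot interruption notice appears at the instance metadata path `spot/instance-action` about two minutes ahead, and that path returns 404 until then. The sketch below polls it using IMDSv2 tokens; what to do on notice (drain connections, checkpoint work) is left to your workload, and this is independent of how Spot.io itself handles interruptions.

```python
"""Poll EC2 instance metadata (IMDSv2) for a Spot interruption notice."""
import json
import time
import urllib.error
import urllib.request
from datetime import datetime
from typing import Optional

IMDS = "http://169.254.169.254/latest"


def parse_instance_action(body: str) -> tuple[str, datetime]:
    """Parse a body like {"action": "terminate", "time": "2026-03-01T08:22:00Z"}."""
    data = json.loads(body)
    return data["action"], datetime.strptime(data["time"], "%Y-%m-%dT%H:%M:%SZ")


def check_for_interruption(timeout: float = 2.0) -> Optional[tuple[str, datetime]]:
    """Return (action, time) if an interruption is scheduled, else None."""
    token_request = urllib.request.Request(
        f"{IMDS}/api/token", method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "300"},
    )
    token = urllib.request.urlopen(token_request, timeout=timeout).read().decode()
    action_request = urllib.request.Request(
        f"{IMDS}/meta-data/spot/instance-action",
        headers={"X-aws-ec2-metadata-token": token},
    )
    try:
        body = urllib.request.urlopen(action_request, timeout=timeout).read().decode()
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None  # no interruption scheduled
        raise
    return parse_instance_action(body)


def watch(poll_seconds: float = 5.0) -> tuple[str, datetime]:
    """Block until a notice appears (only works on an EC2 Spot instance)."""
    while True:
        notice = check_for_interruption()
        if notice:
            return notice
        time.sleep(poll_seconds)
```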

9. Apptio Cloudability

Best for: Enterprise organizations with multi-cloud environments and finance chargeback requirements

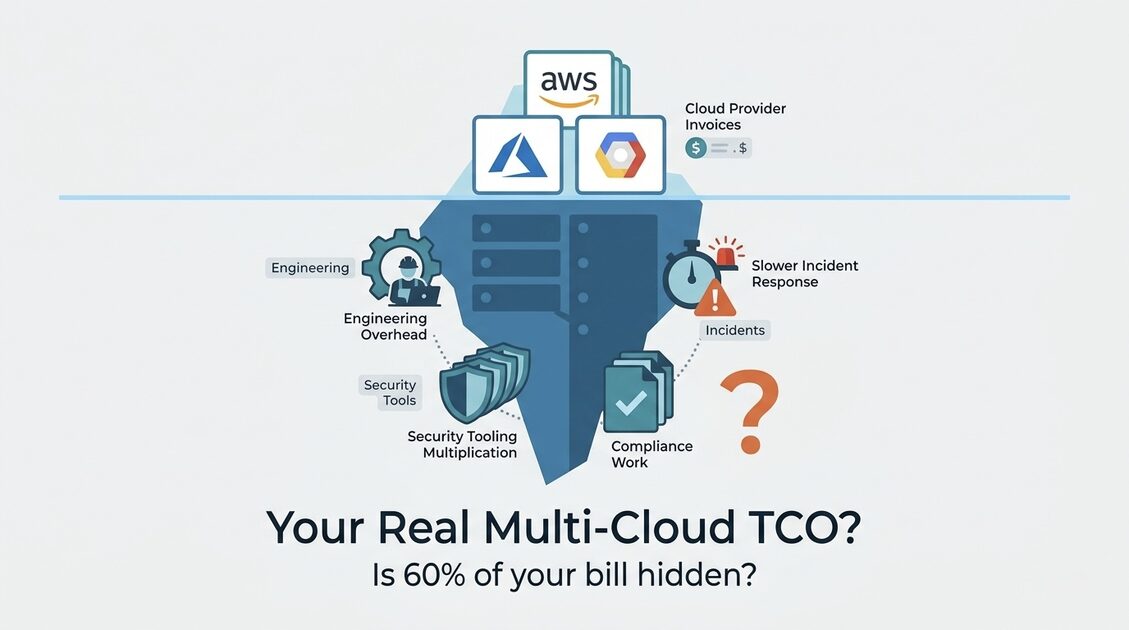

Apptio Cloudability (now part of IBM Apptio following acquisition) is the enterprise-grade choice when you need FinOps governance, chargeback to multiple business units, and executive-level reporting across AWS, Azure, and GCP simultaneously.

The context that helps you evaluate it honestly: Cloudability is designed for the FinOps practitioner role, not the DevOps engineer. It excels at allocation, chargeback, showback, and budget governance across organizational units. The recommendations it surfaces are solid, but the primary value is the financial management layer, not technical optimization depth.

Pricing is in the $50,000 to $150,000 per year range for enterprise deployments. This makes sense for organizations running $5M or more in annual cloud spend, where every 1% of optimization is worth $50,000 and a few percent of savings covers the tool. For teams under $1M in annual cloud spend, the tool would need to save 5 to 15% of the bill just to break even, so the ROI math rarely works.
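A quick way to run that ROI math is to compute the savings rate a tool must deliver just to pay for its own license. The price range and spend levels come from the paragraph above; the $15M tier is an added illustration.

```python
"""Savings rate a cost tool must achieve to cover its own price."""


def breakeven_rate(tool_cost_per_year: float, annual_cloud_spend: float) -> float:
    return tool_cost_per_year / annual_cloud_spend


for spend in (1_000_000, 5_000_000, 15_000_000):
    low = breakeven_rate(50_000, spend)
    high = breakeven_rate(150_000, spend)
    print(f"${spend:,} annual spend: break-even at {low:.1%} to {high:.1%} savings")
```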

10. ProsperOps

Best for: AWS-heavy teams who want hands-off Reserved Instance and Savings Plans optimization

AWS pricing has three layers: on-demand (full price), Savings Plans and Reserved Instances (discounts of up to 72% compared with on-demand, in exchange for a 1- or 3-year commitment), and Spot (up to 90% discount for interruptible workloads). Most teams manage the middle layer manually and get it consistently wrong.

The reality of managing AWS commitments: teams are either under-committed (leaving 15 to 25% discounts on the table by running too much on-demand) or over-committed (paying for reserved capacity that sits unused after workload changes). ProsperOps continuously rebalances your commitment portfolio using a proprietary algorithm that tracks your fleet changes in near real time.

The pricing model is performance-based: ProsperOps charges a percentage of the savings it generates, typically 10 to 15% of achieved savings. If ProsperOps saves a team $200,000 per year on EC2 costs, the fee is $20,000 to $30,000. This fee structure aligns incentives because ProsperOps only makes money when you save money.

What makes this different from managing Savings Plans yourself: AWS Compute Savings Plans are flexible across instance families, regions, and operating systems, but the optimal coverage percentage changes as your fleet evolves. ProsperOps tracks this dynamically. For teams with variable workloads where manual commitment management is a recurring headache, the automation pays for itself quickly.
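Why the optimal commitment moves with your fleet is easiest to see in a toy model. The sketch assumes a single flat 30% discount, measures the commitment in on-demand-equivalent dollars per hour, and ignores the real rules for how AWS applies Savings Plans across usage types, so it illustrates the trade-off rather than modeling AWS billing. It is not ProsperOps' algorithm.

```python
"""Toy model: total cost of a fleet under different hourly commitment levels."""

DISCOUNT = 0.30  # hypothetical flat discount for committed usage


def blended_cost(hourly_usage: list[float], committed_per_hour: float,
                 discount: float = DISCOUNT) -> float:
    """Cost when committed_per_hour of on-demand-equivalent usage is prepaid at a discount.

    The commitment fee is paid every hour whether or not usage reaches it;
    anything above the commitment is billed at on-demand rates.
    """
    fee = committed_per_hour * (1 - discount)
    return sum(fee + max(usage - committed_per_hour, 0.0) for usage in hourly_usage)


def utilization(hourly_usage: list[float], committed_per_hour: float) -> float:
    """Share of the purchased commitment actually consumed."""
    used = sum(min(usage, committed_per_hour) for usage in hourly_usage)
    return used / (committed_per_hour * len(hourly_usage))


# Hypothetical fleet: busy for two hours, then mostly idle (on-demand $/hour).
fleet_usage = [10.0, 10.0, 4.0, 4.0]
for commit in (0.0, 4.0, 7.0, 10.0):
    util = f"{utilization(fleet_usage, commit):.0%}" if commit else "n/a"
    print(f"commit {commit:>4}: cost {blended_cost(fleet_usage, commit):.2f}, utilization {util}")
```

In this toy fleet the smallest commitment wins: committing to the peak leaves 30% of the commitment unused and erases the discount entirely, which is the over-commitment failure mode described above.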

How to Choose the Right Tool for Your Situation

The wrong approach to tool selection is downloading trials for all 10 tools and comparing dashboards. The right approach is matching the tool to your primary cost driver and organizational maturity.

| Situation | Recommended Tool | Why |
|---|---|---|
| AWS-first, under $50k/month | AWS Cost Anomaly Detection + ProsperOps | Free monitoring, automated Savings Plans optimization |
| Kubernetes-heavy workloads | Kubecost free tier | Purpose-built namespace visibility, Prometheus integration |
| GCP-primary workloads | BigQuery billing export + Looker Studio | Near-real-time, free, highly customizable |
| Azure workloads | Azure Cost Management + Advisor | Native, free, Hybrid Benefit optimization |
| Dev and staging environment waste | Harness AutoStopping | Idle shutdown automation, fastest ROI |
| SaaS unit economics needed | CloudZero | Per-customer cost visibility |
| Already using Datadog | Datadog CCM | Same platform, correlates cost with performance |
| Multi-cloud, $1M+ annual spend | Apptio Cloudability | Enterprise chargeback and governance |
| Stateless K8s, want spot pricing | Spot.io Ocean | ML-driven spot interruption management |
| AWS commitments feel manual | ProsperOps | Autonomous RI and Savings Plans optimization |

The question most teams skip entirely: what is your actual top cost driver right now? Running Kubecost when 80% of your spend is RDS is not the highest leverage move. Running Cost Anomaly Detection when you have already optimized your Savings Plans coverage is not either. Start with where the money is actually going, then select the tool that gives you the best visibility and control over that specific category.

The Implementation Playbook Most Teams Skip

Tools do not save money. Processes tied to tools save money. Here is the operating rhythm that turns a cost tool into actual savings:

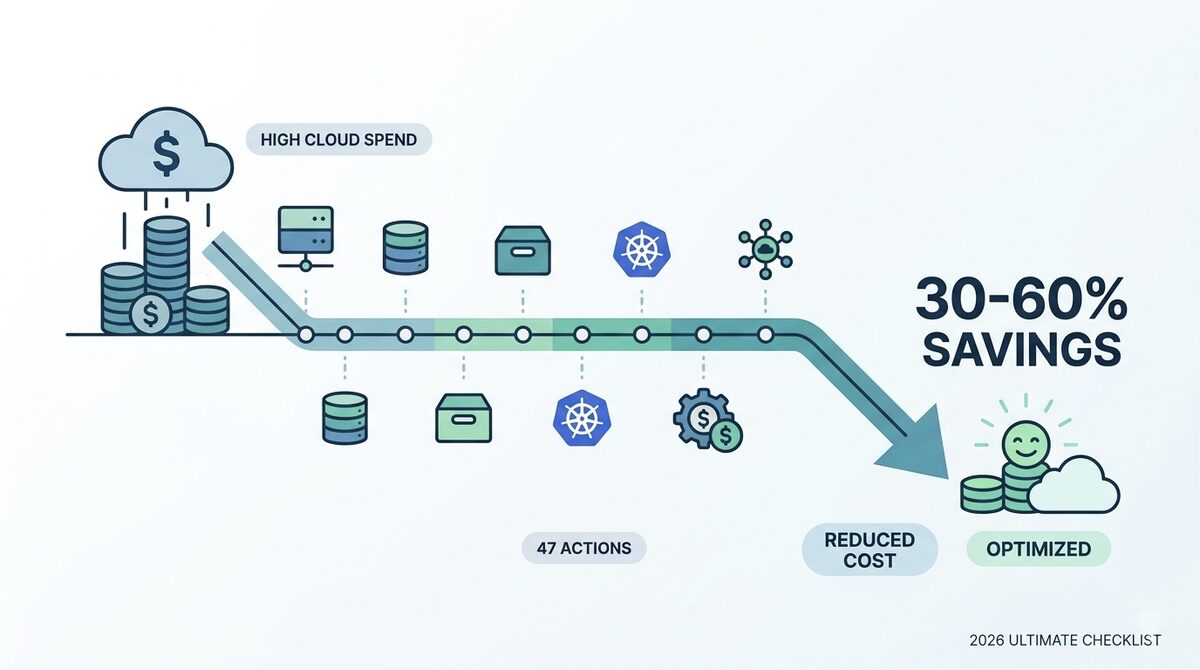

Day 1: Connect all cloud accounts to your chosen tool. Enable billing exports at the highest available granularity. Set up at least one anomaly detection alert with a Slack or Teams integration. Do not optimize anything yet. Just observe.

Week 2: After 7 days of baseline data, identify your top 5 cost categories. For most teams this is compute, storage, data transfer, databases, and managed services. Rank them by spend, not by gut feeling.
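On AWS, the Week 2 ranking can come straight from the Cost Explorer API. The sketch below groups unblended cost by service for the last 7 days. It ignores pagination (`NextPageToken`), and each Cost Explorer API request carries a small charge, so cache the result rather than polling.

```python
"""Rank AWS services by spend over the last 7 days using Cost Explorer."""
from datetime import date, timedelta


def top_services(response: dict, n: int = 5) -> list[tuple[str, float]]:
    """Sum UnblendedCost per service across all periods and return the n largest."""
    totals: dict[str, float] = {}
    for period in response["ResultsByTime"]:
        for group in period.get("Groups", []):
            service = group["Keys"][0]
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[service] = totals.get(service, 0.0) + amount
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]


def fetch_last_week() -> dict:
    """Query Cost Explorer (needs boto3 and ce:GetCostAndUsage permission)."""
    import boto3

    end = date.today()  # End is exclusive in Cost Explorer
    start = end - timedelta(days=7)
    return boto3.client("ce").get_cost_and_usage(
        TimePeriod={"Start": start.isoformat(), "End": end.isoformat()},
        Granularity="DAILY",
        Metrics=["UnblendedCost"],
        GroupBy=[{"Type": "DIMENSION", "Key": "SERVICE"}],
    )


# Usage: for service, cost in top_services(fetch_last_week()): print(f"{service}: ${cost:,.2f}")
```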

Week 3: For your top cost category, request the tool’s right-sizing recommendations. Do not implement all of them. Pick the 3 with the highest savings-to-effort ratio and implement those first. Measure the impact before moving to the next batch.
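Picking the first batch by savings-to-effort ratio is a simple sort once each recommendation has an estimate attached. The recommendations, savings figures, and effort estimates below are hypothetical; effort is in engineer-days.

```python
"""Choose the first batch of right-sizing recommendations by savings per unit of effort."""
from dataclasses import dataclass


@dataclass
class Recommendation:
    name: str
    monthly_savings: float  # USD per month, as estimated by the tool
    effort_days: float      # your team's estimate of engineer-days to implement safely


def first_batch(recs: list[Recommendation], k: int = 3) -> list[Recommendation]:
    """Highest savings per engineer-day first; ties keep their original order."""
    return sorted(recs, key=lambda r: r.monthly_savings / r.effort_days, reverse=True)[:k]


recs = [
    Recommendation("downsize reporting RDS instance", 1_200, 2),
    Recommendation("migrate gp2 volumes to gp3", 400, 0.5),
    Recommendation("right-size API node pool", 3_000, 5),
    Recommendation("shrink analytics cluster", 2_500, 10),
    Recommendation("lower Lambda memory settings", 150, 0.5),
]
for r in first_batch(recs):
    print(f"{r.name}: ${r.monthly_savings / r.effort_days:,.0f} per engineer-day")
```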

Month 2: Establish a weekly cost review cadence. Not a monthly finance review. A 30-minute weekly engineering sync where someone shares the cost dashboard and reviews any anomalies from the prior week. Teams that do this consistently cut costs 2 to 3x faster than teams that review monthly because they catch problems while they are still small.

Ongoing: Every sprint, at least one ticket should touch cost optimization. Not as a dedicated project, but as regular engineering hygiene. A right-sizing ticket, a lifecycle policy ticket, an unused resource cleanup ticket. When cost consciousness is embedded in engineering workflow rather than owned by a separate FinOps team, savings compound over time.

For teams that want structured support building this operating model, FinOps consulting can compress a 6-month tooling maturity journey into 6 weeks.

The 3 Mistakes That Drain Value from Every Cost Tool

Mistake 1: Optimizing visibility without ownership

A dashboard nobody acts on costs more than it saves because you are paying for the tool and getting nothing from it. The most important configuration in any cost tool is not the dashboard layout. It is the alert routing. Every anomaly alert should go to a specific Slack channel with a specific person on-call for it. "The engineering team gets the alert" is not an owner. "Sarah owns the AWS cost anomaly alert on Tuesdays" is an owner.
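Making ownership explicit can be as simple as a rotation table that the alert router consults before posting. The names, weekday schedule, and fallback alias below are hypothetical; the point is that every alert resolves to one person.

```python
"""Resolve which person owns a cost anomaly alert on a given day."""
from datetime import date

# Hypothetical rotation, Monday=0 ... Friday=4; weekends fall back to the on-call alias.
ROTATION = {0: "alex", 1: "sarah", 2: "priya", 3: "sam", 4: "jordan"}
FALLBACK_OWNER = "platform-oncall"


def alert_owner(day: date) -> str:
    return ROTATION.get(day.weekday(), FALLBACK_OWNER)


def format_alert(service: str, impact_usd: float, day: date) -> str:
    return f"@{alert_owner(day)} cost anomaly in {service}: ${impact_usd:,.0f} impact. Please ack."


print(format_alert("AWS Lambda", 820, date(2026, 3, 3)))  # 3 March 2026 is a Tuesday
```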

Mistake 2: Chasing lower unit prices instead of eliminating waste

Switching from gp2 to gp3 EBS volumes saves 20%. But deleting the 400 GB snapshot from a server you decommissioned 8 months ago is free, immediate, and requires no ongoing commitment. The highest-leverage optimization is almost always elimination, not price negotiation. Audit for unused resources before tuning active ones. The ghost resources are hiding in plain sight.
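Ghost resources are easy to list. The sketch below finds EBS snapshots you own that are older than a cutoff, using boto3's `describe_snapshots` paginator. It only reports candidates: snapshots still referenced by an AMI cannot be deleted until the AMI is deregistered, and because snapshots are incremental, `VolumeSize` (the source volume's size) overstates what each one actually costs. The 180-day cutoff is an arbitrary example.

```python
"""List EBS snapshots you own that are older than a cutoff (report only, no deletion)."""
from datetime import datetime, timedelta, timezone
from typing import Optional


def stale_snapshots(snapshots: list[dict], older_than_days: int = 180,
                    now: Optional[datetime] = None) -> list[dict]:
    """Filter snapshot dicts (as returned by describe_snapshots) by StartTime."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=older_than_days)
    return [s for s in snapshots if s["StartTime"] < cutoff]


def own_snapshots(region: str) -> list[dict]:
    """Fetch every snapshot owned by this account in one region (needs boto3)."""
    import boto3

    ec2 = boto3.client("ec2", region_name=region)
    found: list[dict] = []
    for page in ec2.get_paginator("describe_snapshots").paginate(OwnerIds=["self"]):
        found.extend(page["Snapshots"])
    return found


# Usage: for s in stale_snapshots(own_snapshots("us-east-1")):
#     print(s["SnapshotId"], s["StartTime"].date(), f'{s["VolumeSize"]} GiB source volume')
```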

Mistake 3: Implementing all recommendations at once

Cost optimization tools surface dozens of right-sizing recommendations. Implementing all of them simultaneously on production creates operational risk without proportional reward. Use a tiered approach: start with non-production environments, measure the impact, then apply the same changes to production with confidence. The tiered approach feels slower but actually delivers faster results because it avoids the rollbacks that set teams back weeks.

Turning Visibility Into Actual Savings

The cloud cost tool market is crowded, and vendor websites are universally optimistic about what their platforms can do. The practical reality is simpler: the best tool is the one your team will actually use consistently.

For teams under $100k per month in cloud spend, the free native tools (AWS Cost Anomaly Detection, Azure Cost Management, GCP BigQuery export) combined with Kubecost for Kubernetes workloads cover the vast majority of needs. Invest the budget you would have spent on a paid tool into the optimization work itself.

For teams above $200k per month, the unit economics of paid platforms start working in your favor. CloudZero, Datadog CCM, or Spot.io can each return 10x their cost when deployed and operated correctly.

The real work is not the tooling selection. It is building the organizational habits that turn visibility into action: weekly reviews, owned alerts, cost tickets in every sprint, and engineering leads who treat cloud spend as part of their delivery metrics rather than someone else’s problem.

For teams that want to accelerate this process with structured FinOps governance and expert support, the cloud operations and FinOps consulting services from LeanOps are designed to compress a 6-month tooling maturity journey into 6 weeks.

The cloud bill is a direct reflection of your engineering decisions. The right tools make those decisions visible in real time. What you do with that visibility is where the real savings come from.

For deeper dives into specific optimization areas, explore the AWS cost optimization playbook and the Kubernetes cost optimization guide.

External resources: FinOps Foundation open source tools and services list and the CNCF Cloud Native Landscape for broader infrastructure tooling context.